In this blog post, we analyze the major players in Germany's food delivery segment.

Lieferando is the leading food delivery aggregator, acting as an intermediary between restaurants and customers. Through aggregators like this, customers can choose among cuisines, restaurants, prices, and menu items.

To start with, we have to collect data on Lieferando.

There is a Pythonic way to scrape this data, but we won't cover it here. Let's start by navigating to Lieferando's site and entering a delivery address. We've chosen an address in central Berlin, where restaurant availability is likely to be higher.

Before refreshing the page, open the Chrome DevTools inspector. Go to the Network tab and filter by Fetch/XHR to find the request that loads the restaurant list.

In the background, this network request pulls in a JSON file with all restaurants within range of the address we entered. We just have to right-click the request preview and copy it.

Paste it into a text editor and save it as a JSON file.

We will fire up a Jupyter Notebook to see what's inside our JSON file.
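As a minimal sketch of this step, the snippet below loads a tiny stand-in for the saved response into a DataFrame. The top-level `restaurants` key, the IDs, and all field values are assumptions made up for illustration; the real file will be much larger and may be shaped differently.

```python
import json

import pandas as pd

# Hypothetical, heavily trimmed stand-in for the saved response.
# With a real file you would instead do something like:
#   with open("lieferando.json") as f:   # hypothetical file name
#       data = json.load(f)
raw = """
{
  "restaurants": {
    "1001": {
      "brand": {"name": "Pizza Uno"},
      "location": {"city": "Berlin", "lat": "52.5200", "lng": "13.4050"},
      "supports": {"delivery": true},
      "shippingInfo": {"minOrderValue": 10, "deliveryFeeDefault": 2}
    },
    "1002": {
      "brand": {"name": "Sushi Zwei"},
      "location": {"city": "Berlin", "lat": "52.4900", "lng": "13.3900"},
      "supports": {"delivery": false},
      "shippingInfo": {"minOrderValue": 15, "deliveryFeeDefault": 4}
    }
  }
}
"""
data = json.loads(raw)

# A dict of dicts becomes a DataFrame with one column per restaurant ID
# and one row per attribute (brand, location, ...).
df = pd.DataFrame(data["restaurants"])

print(df.shape)
print(df.index.tolist())
```

With this layout, the number of columns is the number of restaurants, which is what we compare against the website later on.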

Let's go through the rows step by step.

The restaurant ID uniquely identifies each restaurant. Every ID is associated with many rows describing the restaurant, such as its brand, location, cuisine types, voucher acceptance, payment methods, and delivery polygons.

Some rows contain nested JSON objects, such as brand, location, supports, ratings, and shippingInfo. Each of these objects holds a second level of JSON data with further fields.

You can think of nested JSON objects as a tree in which each branch splits into further branches until you reach the leaves.
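To make the tree picture concrete, here is a small sketch that walks a nested dictionary down to its leaves. The helper `iter_leaves` and the sample restaurant are hypothetical and only illustrate the idea.

```python
def iter_leaves(node, path=()):
    """Yield (path, value) pairs for every leaf of a nested dict."""
    if isinstance(node, dict):
        # A dict is a branch: descend into each child.
        for key, child in node.items():
            yield from iter_leaves(child, path + (key,))
    else:
        # Anything else is a leaf.
        yield path, node


# Made-up restaurant entry with second-level objects.
restaurant = {
    "brand": {"name": "Pizza Uno"},
    "location": {"city": "Berlin", "lat": "52.5200", "lng": "13.4050"},
    "supports": {"delivery": True},
}

leaves = list(iter_leaves(restaurant))
for path, value in leaves:
    print(".".join(path), "=", value)
```

Each printed line is one leaf, labeled with the branches that lead to it, for example `location.city`.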

Next, we filter the dataset down to the rows we want.
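One way to do this filtering, sketched on made-up data, is to select rows by label with `.loc`. The list of wanted rows is our assumption based on the objects used later in this post; the sample values are invented.

```python
import pandas as pd

# Made-up frame: columns are restaurant IDs, rows are attributes.
df = pd.DataFrame({
    "1001": {
        "brand": {"name": "Pizza Uno"},
        "location": {"city": "Berlin"},
        "supports": {"delivery": True},
        "shippingInfo": {"minOrderValue": 10, "deliveryFeeDefault": 2},
        "ratings": {"score": 4.5},
    },
    "1002": {
        "brand": {"name": "Sushi Zwei"},
        "location": {"city": "Berlin"},
        "supports": {"delivery": False},
        "shippingInfo": {"minOrderValue": 15, "deliveryFeeDefault": 4},
        "ratings": {"score": 4.1},
    },
})

# Keep only the rows we plan to work with.
wanted_rows = ["brand", "location", "supports", "shippingInfo"]
df_filtered = df.loc[wanted_rows]

print(df_filtered.index.tolist())
```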

By comparing the number of columns with the total number of restaurants shown on Lieferando's main page, we see that the dataset contains more restaurants than the site lists. Looking at the 'supports' row, we can see a Boolean 'delivery' value that is either True or False. We suspect there are False values in our dataset, meaning restaurants that don't deliver are included, so let's filter those out.

What we do here is take the 'supports' row and convert each entry into a Pandas Series, which is a one-dimensional labeled array. The apply method applies the Series constructor to every element in the row.

Our 'delivery_is_true' object now holds all restaurant IDs with a True delivery value. We will use this list to filter the whole dataset. After that, we repeat the exercise and expand the 'location' row.
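The following is a rough sketch of that step on made-up data. The `pickup` key and the sample restaurants are assumptions; only `supports`, `delivery`, and `delivery_is_true` come from the text above.

```python
import pandas as pd

# Made-up frame: columns are restaurant IDs, rows are attributes.
df = pd.DataFrame({
    "1001": {"brand": {"name": "Pizza Uno"},
             "supports": {"delivery": True, "pickup": True}},
    "1002": {"brand": {"name": "Sushi Zwei"},
             "supports": {"delivery": False, "pickup": True}},
    "1003": {"brand": {"name": "Curry Drei"},
             "supports": {"delivery": True, "pickup": False}},
})

# Turn each dict in the 'supports' row into its own set of columns.
# The result has one row per restaurant ID.
supports = df.loc["supports"].apply(pd.Series)

# Collect the IDs of restaurants that actually deliver.
delivers = supports["delivery"].astype(bool)
delivery_is_true = supports[delivers].index.tolist()

# Keep only those restaurants (columns) in the full dataset.
df_delivering = df[delivery_is_true]

print(delivery_is_true)
```

The same `apply(pd.Series)` pattern can then be reused for the 'location' row.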

Now we have the city, country, street address, time zone, and coordinates for every restaurant ID.

We repeat the exercise with the 'shippingInfo' and 'brand' rows. Inside 'brand', we want the 'name' column, and within 'shippingInfo', we keep only the columns 'minOrderValue' and 'deliveryFeeDefault'.

Now we have the delivery fee and minimum order value for every restaurant ID. These numbers are stored as integers, so we convert them to floats.
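A minimal sketch of these two steps, again on invented data, could look like this. The `deliveryTime` key is a hypothetical extra field to show the column filter at work. The text does not say which unit the raw integers are in, so the sketch only changes the data type.

```python
import pandas as pd

# Made-up frame: columns are restaurant IDs, rows are attributes.
df = pd.DataFrame({
    "1001": {
        "brand": {"name": "Pizza Uno"},
        "shippingInfo": {"minOrderValue": 10, "deliveryFeeDefault": 2,
                         "deliveryTime": 30},
    },
    "1002": {
        "brand": {"name": "Sushi Zwei"},
        "shippingInfo": {"minOrderValue": 15, "deliveryFeeDefault": 4,
                         "deliveryTime": 45},
    },
})

# Expand 'brand' and keep only the restaurant name.
brand = df.loc["brand"].apply(pd.Series)[["name"]]

# Expand 'shippingInfo' and keep only the two columns we need.
shipping = df.loc["shippingInfo"].apply(pd.Series)[
    ["minOrderValue", "deliveryFeeDefault"]
]

# The values arrive as integers; convert them to floats.
shipping = shipping.astype(float)

print(brand)
print(shipping.dtypes)
```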

We now combine all the processed objects into one Lieferando dataset.
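Because every processed object is indexed by restaurant ID, one way to combine them is a column-wise `pd.concat`. This is a sketch with invented frames; the name `lieferando` and the index label are our own choices.

```python
import pandas as pd

ids = ["1001", "1002"]

# Made-up versions of the objects processed in the previous steps,
# all sharing the restaurant ID as index.
brand = pd.DataFrame({"name": ["Pizza Uno", "Sushi Zwei"]}, index=ids)
location = pd.DataFrame(
    {"city": ["Berlin", "Berlin"],
     "lat": ["52.5200", "52.4900"],
     "lng": ["13.4050", "13.3900"]},
    index=ids,
)
shipping = pd.DataFrame(
    {"minOrderValue": [10.0, 15.0], "deliveryFeeDefault": [2.0, 4.0]},
    index=ids,
)

# axis=1 places the frames side by side, aligned on the shared index.
lieferando = pd.concat([brand, location, shipping], axis=1)
lieferando.index.name = "restaurant_id"

print(lieferando)
```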

Fantastic, so we have our complete list of restaurants, addresses, delivery fees, and minimum order values. If we want to see what share of all restaurants falls into each combination, we need to pivot 'deliveryFeeDefault' and 'minOrderValue' by the count of restaurants.
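One possible way to build such a table is `pd.crosstab` with normalization, which counts restaurants per combination and converts the counts to shares. This is our choice of tool for the sketch, and the four restaurants below are invented, so the percentages do not reflect the real data.

```python
import pandas as pd

# Made-up combined dataset with four restaurants.
lieferando = pd.DataFrame({
    "minOrderValue": [10.0, 10.0, 10.0, 15.0],
    "deliveryFeeDefault": [0.0, 4.5, 4.5, 2.0],
})

# Count restaurants per (fee, minimum order) pair and express each
# cell as a percentage of all restaurants.
share = pd.crosstab(
    lieferando["deliveryFeeDefault"],
    lieferando["minOrderValue"],
    normalize="all",
) * 100

print(share.round(1))
```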

Based on the resulting table, we see that around 70% of the restaurants delivering to our address have a minimum order value of 10 euros, and delivery fees range from 0 to 5 euros, most of them in the 4-5 euro bucket. One factor that likely goes into the delivery fee is the distance between the restaurant and the customer, along with the time needed to deliver.

Since we have the coordinates for all the Lieferando restaurants, we can go one step further and plot them across Berlin.

To do that, we first need to convert the latitude and longitude columns to floats and read a suitable shapefile for Berlin.
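The conversion part can be sketched as follows. The column names `lat` and `lng` and the coordinate values are assumptions. Reading the shapefile is left out here because the text does not say which file was used.

```python
import pandas as pd

# Made-up location data with coordinates stored as strings.
location = pd.DataFrame(
    {"city": ["Berlin", "Berlin"],
     "lat": ["52.5200", "52.4900"],
     "lng": ["13.4050", "13.3900"]},
    index=["1001", "1002"],
)

# Convert only the coordinate columns to floats so they can be plotted.
location = location.astype({"lat": float, "lng": float})

print(location.dtypes)
```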

In addition, we add the coordinates of our chosen address and show them as a red point.

Good! Now all restaurants that deliver to the address are visualized as orange dots. Next, let's verify that the farther a restaurant is from the customer, the higher the delivery fee. We can use the earlier insight that at least 70% of the delivering restaurants charge between 0 and 5 euros, and write a function that assigns a color to each scatter point depending on its delivery fee.
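A color function of this kind might look like the sketch below. The name `fee_to_color`, the exact bucket boundaries, and the colors are our own assumptions, loosely following the 0-3.99 and 4-5 euro ranges mentioned in this post.

```python
def fee_to_color(fee):
    """Map a delivery fee in euros to a color name for a scatter plot."""
    if fee < 4:
        return "green"   # 0 to 3.99 euros
    if fee <= 5:
        return "orange"  # 4 to 5 euros
    return "red"         # anything above 5 euros


# Made-up delivery fees, one per restaurant.
fees = [0.0, 1.99, 3.99, 4.5, 6.0]
colors = [fee_to_color(fee) for fee in fees]

print(colors)
```

The resulting list can be passed as the per-point color argument of a scatter plot, for example `c=colors` in matplotlib's `scatter`.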

Wonderful! So we've seen that the closer a restaurant is to the customer, the lower the delivery fee tends to be. There are a few exceptions where a restaurant is far outside the inner city, yet its delivery fee is still 0-3.99 euros.

That's it for now! Happy Scraping!

For more information, contact Actowiz Solutions!

Contact us for all your mobile app scraping and web scraping services requirements.

Our web scraping expertise is relied on by 4,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

Australian Top Supermarkets Dataset to analyze pricing, store locations, and consumer trends for smarter retail insights and growth strategies.

Discover how agencies use brand data intelligence for cross-border lead generation. Learn how data-driven insights improve client pitches, targeting, and conversion rates globally.

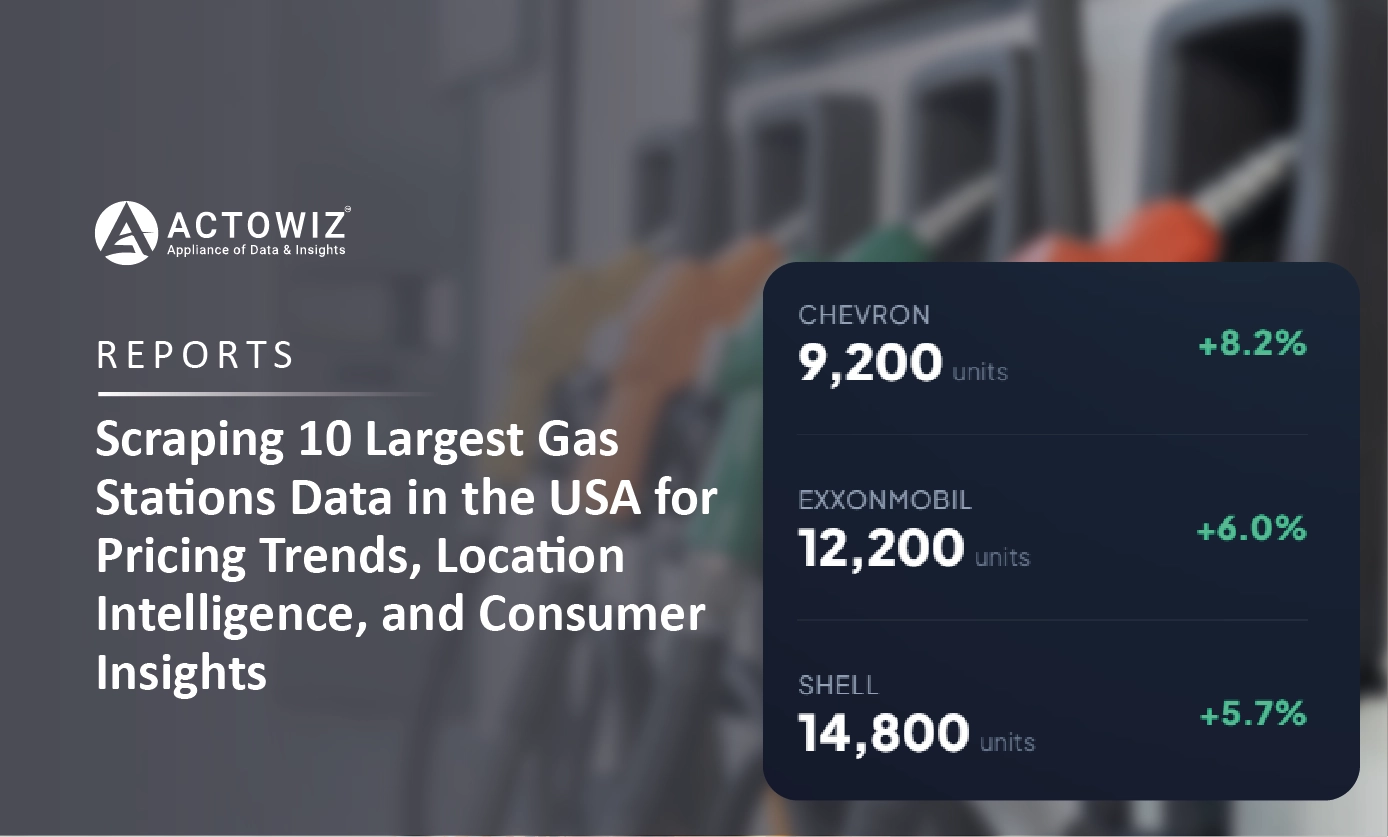

Scraping 10 Largest Gas Stations Data in the USA for pricing insights, location intelligence, and smarter fuel market analytics.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.