Online grocery shopping has become increasingly popular, and DoorDash, a leading online delivery platform, offers a wide range of grocery items that users can order and have delivered to their doorsteps. There may be times when you want to extract grocery information from DoorDash for purposes such as price comparison or data analysis. In this blog, we will explore how to scrape grocery information from DoorDash using Python so that you can use the data in your own projects.

Web scraping is the process of extracting data from websites automatically. It involves writing code to retrieve the HTML content of a web page and then parsing that content to extract the desired information. Python provides several powerful libraries, such as BeautifulSoup and requests, that make web scraping relatively straightforward.

DoorDash is a well-known online delivery platform that connects users with local restaurants, grocery stores, and other retailers. Its grocery section lets users order groceries online and have them delivered to their doorstep.

By leveraging web scraping techniques, we can access and extract valuable grocery information from DoorDash. This data can be used for various purposes, such as price comparison, inventory analysis, market research, or building recommendation systems. Scraping grocery information from DoorDash allows us to gather insights and make informed decisions based on the available data.

It's important to note that when scraping data from any website, including DoorDash, you should always review and respect the website's terms of service and scraping policies. Ensure that your scraping activities are within legal and ethical boundaries, and be considerate of the website's resources by implementing appropriate delays between requests to avoid excessive load on their servers.

Before we can start scraping grocery information from DoorDash, we need to set up our Python environment. This involves installing Python, creating a virtual environment, and installing the necessary libraries for web scraping.

First, make sure Python is installed on your system. You can download the latest version of Python from the official Python website (https://www.python.org/downloads/) and follow the installation instructions for your operating system.

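Creating a virtual environment is recommended to keep your project dependencies isolated. Open a terminal or command prompt and navigate to the directory where you want to create your project. Then create the environment, for example with python -m venv venv, and activate it with source venv/bin/activate on macOS or Linux, or venv\Scripts\activate on Windows. The final venv in the first command is only a directory name, so you can choose another.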

To perform web scraping, we need to install two essential libraries: BeautifulSoup and requests. These libraries provide the necessary tools to fetch web pages and parse HTML content.

In your activated virtual environment, run the following command to install the libraries:

pip install beautifulsoup4 requests

Once the installation is complete, we are ready to move on to the next steps.

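Depending on the requirements of your web scraping project, you may need to install additional libraries. BeautifulSoup and requests are enough when the grocery information you want is present in the HTML that the server returns. If a page builds its product listings with JavaScript after loading, that HTML may not contain them, and a browser automation tool such as Selenium may be needed instead.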

In the next section, we will explore how to inspect DoorDash's grocery pages using browser developer tools. Understanding the structure of the web pages will help us identify the data we want to extract.

To scrape grocery information from DoorDash, we need to understand the structure of the web pages containing the desired data. By inspecting the HTML structure of these pages using browser developer tools, we can identify the elements and attributes that hold the information we want to extract.

Follow the steps below to inspect DoorDash's grocery pages:

Open your preferred web browser and go to the DoorDash website (https://www.doordash.com/). If you don't already have an account, you may need to create one.

Once you're logged in, navigate to the grocery section of DoorDash. This is where you can browse and order groceries.

In most modern browsers, you can access the developer tools by right-clicking anywhere on the web page and selecting "Inspect" or "Inspect Element." Alternatively, you can use the browser's menu to find the developer tools option. Each browser has a slightly different way of accessing the developer tools, so refer to the documentation of your specific browser if needed.

The developer tools will open, displaying the HTML structure of the web page. You should see a panel showing the page's HTML tree alongside the rendered page. The exact layout depends on your browser and on where the tools are docked.

Use the developer tools to inspect the different elements on the page. Hover over elements in the HTML code to highlight corresponding sections of the web page. This allows you to identify the specific elements that contain the grocery information you're interested in, such as product names, prices, descriptions, and categories.

Click on the elements of interest to view their attributes and the corresponding HTML code. Take note of the class names, IDs, or other attributes that uniquely identify the elements holding the desired information.

In addition to inspecting the HTML structure, you can also examine the network requests made by the web page. Look for requests that retrieve data specifically related to the grocery information. These requests might return JSON or XML responses containing additional data that can be extracted.
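If one of those requests does return JSON, the response body can be read with Python's built-in json module instead of being parsed as HTML. The following is a minimal sketch under a loud assumption: the payload shape and the keys items, name, and price are made up for illustration, so check the real response in the Network tab before relying on any of them.

```python
import json

# Hypothetical payload standing in for a response body copied from the Network tab.
sample_body = (
    '{"items": [{"name": "Bananas", "price": 0.59}, '
    '{"name": "Milk", "price": 3.49}]}'
)


def parse_items(body):
    """Return (name, price) pairs from a JSON body with the assumed shape."""
    data = json.loads(body)
    # .get() with a default keeps the function from failing when a key is absent.
    return [(item.get("name"), item.get("price")) for item in data.get("items", [])]


for name, price in parse_items(sample_body):
    print(name, price)
```

Reading structured JSON this way is usually less brittle than matching class names in HTML, although the keys can still change without notice.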

By understanding the structure of the web pages and the underlying requests, you'll have a clearer idea of how to extract the grocery information using web scraping techniques.

In the next section, we'll dive into the process of extracting data from DoorDash using Python libraries such as BeautifulSoup and requests.

Now that we have set up our environment and familiarized ourselves with the structure of DoorDash's grocery pages, we can proceed with extracting the desired data using Python libraries. In this section, we will install the necessary libraries and explore how to make HTTP requests to DoorDash's website, as well as parse the HTML content.

Before we can start scraping, we need to ensure that the BeautifulSoup and requests libraries are installed. If you haven't already installed them, run the following command in your activated virtual environment:

pip install beautifulsoup4 requests

To retrieve the HTML content of DoorDash's grocery pages, we will use the requests library to make HTTP GET requests. The BeautifulSoup library will then be used to parse the HTML and extract the desired data.

Open your Python editor or IDE and create a new Python script. Start by importing the necessary libraries:

import requests
from bs4 import BeautifulSoup

Next, we need to make a request to the DoorDash grocery page and retrieve the HTML content. We'll use the requests library for this:
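A minimal sketch of this request follows. The URL is only a placeholder for the grocery page you inspected, and the fetch_html helper, the User-Agent string, and the timeout value are illustrative assumptions rather than anything DoorDash or requests prescribes.

```python
import requests

# Placeholder: substitute the URL of the grocery page you inspected.
url = "https://www.doordash.com/"


def fetch_html(url, session=None, timeout=10):
    """Send an HTTP GET request and return the raw HTML bytes."""
    # Reuse a caller-supplied session if given; otherwise create a new one.
    if session is None:
        session = requests.Session()
    response = session.get(
        url,
        headers={"User-Agent": "grocery-scraper-example/0.1"},  # illustrative value
        timeout=timeout,
    )
    # Raise an exception on 4xx/5xx responses instead of parsing an error page.
    response.raise_for_status()
    html_content = response.content
    return html_content
```

Calling html_content = fetch_html(url) then gives the bytes that are parsed in the next step. The site may reject or challenge automated requests, in which case raise_for_status will raise an exception.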

We provide the URL of the DoorDash grocery page and use the get method of the requests library to send the HTTP GET request. The response is stored in the response variable, and we extract the HTML content using the content attribute.

Now that we have the HTML content, we can use BeautifulSoup to parse it and navigate through the HTML structure to extract the desired information. We create a BeautifulSoup object and specify the parser to use, here 'html.parser', which is the parser built into Python's standard library and needs no extra installation:

soup = BeautifulSoup(html_content, 'html.parser')

We now have a BeautifulSoup object, soup, that represents the parsed HTML content. We can use various methods and selectors provided by BeautifulSoup to extract specific elements and their data.
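A few of those methods are sketched here against a small made-up HTML fragment. The tag names, the id, and the class names are hypothetical and do not come from DoorDash.

```python
from bs4 import BeautifulSoup

# Made-up fragment for illustration only.
sample_html = """
<div id="store">
  <h1 class="store-name">Example Market</h1>
  <ul>
    <li class="item">Apples</li>
    <li class="item">Bread</li>
  </ul>
</div>
"""

demo_soup = BeautifulSoup(sample_html, "html.parser")

# find() returns the first matching element, or None if nothing matches.
store_name = demo_soup.find("h1", class_="store-name").get_text(strip=True)

# find_all() returns every matching element.
items = [li.get_text(strip=True) for li in demo_soup.find_all("li", class_="item")]

# select_one() and select() accept CSS selectors.
first_item = demo_soup.select_one("#store li.item").get_text(strip=True)

print(store_name, items, first_item)
```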

In the next section, we will dive into the process of scraping grocery information from DoorDash, including retrieving grocery categories, extracting product details, and handling pagination to ensure comprehensive data extraction.

In this section, we will delve into the process of scraping grocery information from DoorDash using Python. We'll outline the steps to retrieve grocery categories, extract product details, and handle pagination to ensure comprehensive data extraction.

Before scraping individual product details, let's first retrieve the list of grocery categories available on DoorDash. These categories will serve as a starting point for navigating through the grocery sections.

To extract grocery categories, we can locate the HTML elements that contain the category names and retrieve the text from those elements. Here's an example code snippet:
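A minimal sketch of this step follows. The HTML fragment is invented so the example runs without a network request, and the class name 'css-xyid0g' is the one used in this post, which may not match the live site.

```python
from bs4 import BeautifulSoup

# Stand-in for the HTML fetched earlier; the markup is invented for illustration.
html_content = """
<nav>
  <a class="css-xyid0g">Produce</a>
  <a class="css-xyid0g">Dairy</a>
  <a class="css-xyid0g">Bakery</a>
</nav>
"""

soup = BeautifulSoup(html_content, "html.parser")

# Generated class names like this one can change at any time;
# confirm the current value in the developer tools.
category_elements = soup.find_all(class_="css-xyid0g")

for element in category_elements:
    print(element.text.strip())
```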

In the code above, we use the find_all method of the BeautifulSoup object to find all elements with the specified class name ('css-xyid0g' in this case). We then iterate over the found elements and extract the text using the text attribute. You can modify this code to store the category names in a list or a data structure of your choice.

Once we have the list of grocery categories, we can navigate through each category and extract product details such as names, prices, descriptions, and more.

To extract product details, we need to locate the HTML elements that contain the desired information. Inspect the HTML structure of the product listings to identify the relevant elements and their attributes.

Here's an example code snippet that demonstrates how to extract the name and price of each product:
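A minimal sketch under stated assumptions: the markup is invented, and the class names product-card, product-name, and product-price are hypothetical placeholders for whatever the developer tools show on the real page.

```python
from bs4 import BeautifulSoup

# Invented markup; the class names below are hypothetical placeholders.
html_content = """
<div class="product-card">
  <span class="product-name">Organic Bananas</span>
  <span class="product-price">$0.79</span>
</div>
<div class="product-card">
  <span class="product-name">Whole Milk</span>
  <span class="product-price">$3.49</span>
</div>
"""

soup = BeautifulSoup(html_content, "html.parser")

products = []
for card in soup.find_all("div", class_="product-card"):
    name = card.find(class_="product-name")
    price = card.find(class_="product-price")
    # Skip cards missing either field instead of raising AttributeError.
    if name is None or price is None:
        continue
    products.append({"name": name.text.strip(), "price": price.text.strip()})

for product in products:
    print(product["name"], product["price"])
```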

In the code above, we use the find_all method to locate all elements that wrap a single product, and then read the name and price from inside each one.

You can adapt this code snippet to extract other product details based on the specific HTML structure of DoorDash's grocery pages.

DoorDash's grocery pages often employ pagination to display products across multiple pages. To ensure comprehensive data extraction, we need to handle pagination and scrape data from each page.

To navigate through the pages and extract data, we can utilize the URL parameters that change when moving between pages. By modifying these parameters, we can simulate clicking on the pagination links programmatically.

Here's an example code snippet that demonstrates how to scrape data from multiple pages:
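A minimal sketch of such a loop follows. Everything site-specific in it is an assumption: the page query parameter, the next-button class, and the product class names are hypothetical. The max_pages cap and the delay between requests are additions made here for safety and politeness.

```python
import time

import requests
from bs4 import BeautifulSoup

# Placeholder URL; the real listing URL and its parameters must be taken
# from what you observe in the browser.
BASE_URL = "https://www.doordash.com/"


def fetch_page(page):
    """Fetch one listing page, assuming a hypothetical `page` query parameter."""
    response = requests.get(BASE_URL, params={"page": page}, timeout=10)
    response.raise_for_status()
    return response.content


def scrape_all_pages(fetch=fetch_page, delay_seconds=1.0, max_pages=50):
    """Collect products page by page until no "Next" button is found."""
    products = []
    page = 1
    while page <= max_pages:
        soup = BeautifulSoup(fetch(page), "html.parser")
        for card in soup.find_all("div", class_="product-card"):
            name = card.find(class_="product-name")
            price = card.find(class_="product-price")
            if name is not None and price is not None:
                products.append(
                    {"name": name.text.strip(), "price": price.text.strip()}
                )
        # Stop when the page has no "Next" button (hypothetical class name).
        if soup.find("a", class_="next-button") is None:
            break
        page += 1
        # Pause between requests to avoid excessive load on the server.
        time.sleep(delay_seconds)
    return products
```

Passing the fetch function as a parameter makes it possible to try the loop on saved HTML before pointing it at the live site.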

In the code above, we start with the initial page (page number 1) and loop through the pages until there is no "Next" button available. Inside the loop, we make the HTTP request, parse the HTML content, and extract the product details. After that, we check if there is a "Next" button on the page. If not, we break out of the loop.

Remember to adjust the code based on the specific HTML structure and URL parameters used in DoorDash's pagination.

By combining the techniques described above, you can scrape grocery information from DoorDash, including categories, product details, and data from multiple pages.

To recap, web scraping is the automated extraction of data from websites, and Python provides powerful libraries for it, such as BeautifulSoup and requests. DoorDash is an online delivery platform that offers a wide variety of grocery items from various stores, which makes it a valuable source of grocery information.

The first step is to set up your Python environment: install Python, create a virtual environment, and install the necessary libraries.

Next, inspect the HTML structure of DoorDash's grocery pages with browser developer tools so that you understand the pages you'll be extracting data from.

Then use requests to make HTTP requests to DoorDash's website and BeautifulSoup to parse the returned HTML content.

Finally, retrieve the grocery categories, extract the product details, and handle pagination so that the extraction is comprehensive.

Once you have scraped the grocery information, you can store it for future use, for example in a CSV file or a database, or use it directly in your Python code.
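As one illustration of the CSV option, the sketch below writes a list of product dictionaries with Python's built-in csv module. The rows are invented, and the name and price columns are an assumption about what your scraper collects.

```python
import csv

# Example rows in the shape a scraper might produce; the values are invented.
products = [
    {"name": "Organic Bananas", "price": "$0.79"},
    {"name": "Whole Milk", "price": "$3.49"},
]


def save_to_csv(rows, path):
    """Write a list of dicts to a CSV file with a header row."""
    # newline="" prevents blank lines between rows on Windows.
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=["name", "price"])
        writer.writeheader()
        writer.writerows(rows)
```

Calling save_to_csv(products, "doordash_groceries.csv") would create the file in the current directory. The file name is only an example.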

Actowiz Solutions, with its expertise in web scraping and data extraction, provides a reliable and efficient solution for scraping grocery information from DoorDash. Drawing on its knowledge of Python and web scraping libraries, Actowiz Solutions enables businesses to access grocery data from DoorDash's online platform.

Throughout this blog, we have explored the process of scraping grocery information from DoorDash using Python. Actowiz Solutions' capabilities in this domain allow businesses to gather insights, perform price comparisons, analyze market trends, and make data-driven decisions in the online grocery landscape.

Actowiz Solutions carries out scraping responsibly and ethically, respecting the website's terms of service and staying within legal boundaries, so that businesses using its services can extract data without infringing on policies or regulations.

Actowiz Solutions also provides guidance on storing the scraped grocery data securely, whether in a CSV file, a database, or another appropriate storage system, so that businesses can use it for price analysis, inventory management, and market research.

In conclusion, Actowiz Solutions is a trusted partner for businesses seeking to scrape grocery information from DoorDash. With its expertise in web scraping, ethical practices, and focus on data utilization, Actowiz Solutions helps businesses use DoorDash grocery data to gain a competitive edge in the online grocery market.

You can also reach us for all your mobile app scraping, instant data scraper, web scraping service requirements.

Our web scraping expertise is relied on by 4,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

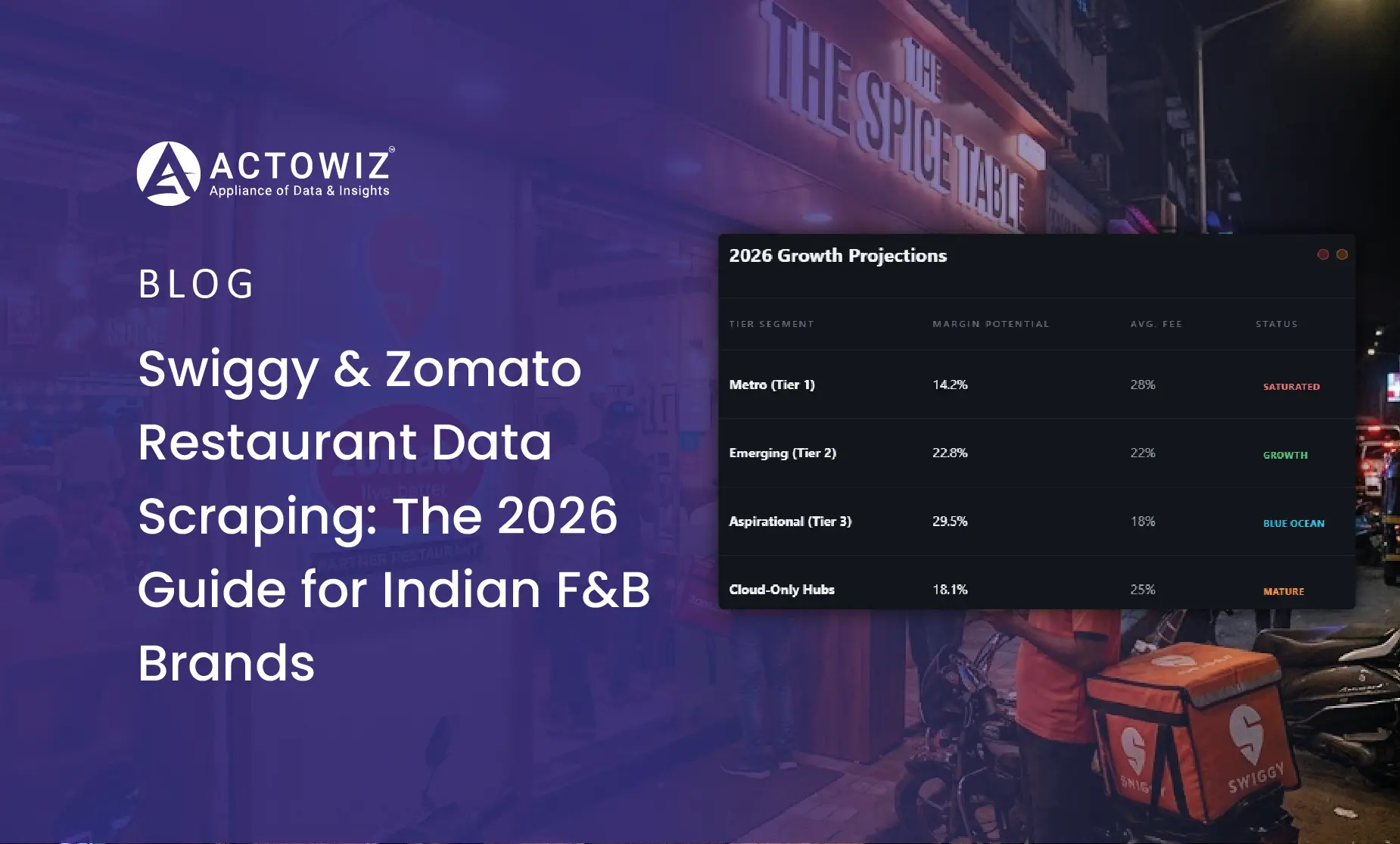

Complete guide to scraping Swiggy and Zomato restaurant menus, pricing, and review data. Built for Indian restaurant chains, cloud kitchens, FMCG HoReCa teams, and food-tech analysts.

Learn how Save Mart increased category revenue by 18% using data-driven assortment planning and local product intelligence. Discover strategies to optimize product mix, meet local demand, and boost retail performance.

Track UK Grocery Products Daily Using Automated Data Scraping across Morrisons, Asda, Tesco, Sainsbury’s, Iceland, Co-op, Waitrose, and Ocado for insights.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.