To scan major crypto exchanges such as Binance and KuCoin on low timeframes (e.g., 1min, 3min, 5min) and identify historical price movements above a given percentage, we can build a web scraping and data processing system. Users specify the parameters (exchange, coin, time interval, and percentage threshold), and the system produces an easy-to-read list in Excel format.

Here's a high-level overview of the steps involved in this process:

User input: Allow the user to input the desired parameters, including the exchange (e.g., KuCoin), coin, time interval (e.g., 1min, 3min, 5min), and the percentage threshold for price movements (e.g., 20%).

Web scraping: Utilize web scraping techniques to fetch historical price data from the specified exchange and coin pair at the selected time intervals.

Data processing: Analyze the historical price data to identify movements exceeding the specified percentage threshold.

Output: Generate an easy-to-understand list with relevant information such as "exchange - coin - date & time of move - movement percent" in Excel format.

Parameter flexibility: Ensure that users can change the parameters easily to scan different exchanges, coins, time intervals, and percentage thresholds.
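The data processing and output steps can be sketched as follows. This is a minimal illustration, not a finished scanner: it assumes the candles have already been fetched, and it measures a "movement" as the open-to-close change of a single candle, which is only one possible definition (the high-to-low range, or the change across several candles, would also work). The `Candle` class and `find_moves` function are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class Candle:
    """One OHLC candle; open_time is a Unix timestamp in milliseconds (assumed)."""
    open_time: int
    open: float
    high: float
    low: float
    close: float


def find_moves(exchange, coin, candles, threshold_pct):
    """Return one row per candle whose open-to-close change meets the threshold.

    The move is (close - open) / open * 100 and is compared by absolute value,
    so both sharp rises and sharp drops are reported.
    """
    rows = []
    for c in candles:
        if c.open == 0:
            continue  # skip malformed candles instead of dividing by zero
        move_pct = (c.close - c.open) / c.open * 100
        if abs(move_pct) >= threshold_pct:
            when = datetime.fromtimestamp(c.open_time / 1000, tz=timezone.utc)
            rows.append({
                "exchange": exchange,
                "coin": coin,
                "datetime_utc": when.strftime("%Y-%m-%d %H:%M"),
                "move_pct": round(move_pct, 2),
            })
    return rows


if __name__ == "__main__":
    sample = [
        Candle(1700000000000, 1.00, 1.30, 0.98, 1.25),  # +25% candle
        Candle(1700000060000, 1.25, 1.26, 1.20, 1.22),  # -2.4% candle
    ]
    for row in find_moves("KuCoin", "XYZ-USDT", sample, threshold_pct=20):
        print(row)
```

Each returned dict carries the "exchange - coin - date & time of move - movement percent" fields, so the Excel export can be a single pandas call such as `pd.DataFrame(moves).to_excel("moves.xlsx", index=False)`, which requires openpyxl to be installed.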

Note: Keep in mind that web scraping may be subject to the terms of service of the exchanges and requires proper handling to avoid overwhelming their servers with excessive requests.

Implementing such a system may involve multiple Python libraries, such as requests, BeautifulSoup, pandas, and openpyxl (for handling Excel files). Additionally, consider implementing error handling, rate limiting, and authentication (if required by the exchanges).
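Scraping HTML is not the only way to get candle data: many exchanges, Binance among them, publish a public REST endpoint for candlesticks, which is usually more stable than parsing web pages. The sketch below swaps in that API approach and assumes Binance's `GET /api/v3/klines` endpoint with its interval strings (`1m`, `3m`, `5m`). Other exchanges use different URLs, symbol formats (KuCoin writes pairs as `BTC-USDT`), and response layouts, so the parsing is exchange-specific. It also illustrates the retry and rate-limiting ideas above; `parse_klines`, `fetch_klines`, and `fetch_many` are hypothetical helper names.

```python
import time

import requests

BINANCE_KLINES_URL = "https://api.binance.com/api/v3/klines"


def parse_klines(raw):
    """Convert Binance kline arrays into (open_time_ms, open, high, low, close).

    Binance returns each kline as a list whose first five fields are the open
    time in milliseconds followed by open, high, low and close as strings.
    """
    return [(int(k[0]), float(k[1]), float(k[2]), float(k[3]), float(k[4])) for k in raw]


def fetch_klines(symbol, interval, limit=500, retries=3, pause_s=1.0):
    """Fetch recent candles for one symbol, retrying with a growing pause on errors."""
    params = {"symbol": symbol, "interval": interval, "limit": limit}
    for attempt in range(1, retries + 1):
        try:
            resp = requests.get(BINANCE_KLINES_URL, params=params, timeout=10)
            resp.raise_for_status()
            return parse_klines(resp.json())
        except requests.RequestException:
            if attempt == retries:
                raise  # give up after the last attempt
            time.sleep(pause_s * attempt)  # simple linear backoff


def fetch_many(symbols, interval, delay_s=0.5):
    """Fetch several symbols one at a time, pausing between requests."""
    result = {}
    for symbol in symbols:
        result[symbol] = fetch_klines(symbol, interval)
        time.sleep(delay_s)  # stay well below the exchange's request limits
    return result
```

For example, `fetch_many(["BTCUSDT", "ETHUSDT"], "1m")` would return a dict of candle tuples per symbol, ready for threshold filtering.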

In this project, we scrape the crypto.com site to obtain data for the top 500 cryptocurrencies. We then store all the extracted data in a MySQL database, creating a new table named after the current timestamp on each run so that historical records are preserved.

In today's digital age, web scraping has become a crucial skill. It empowers us to extract information from websites, from simple names to valuable data stored in tables. This ability to automate tasks through web scraping is immensely beneficial. For instance, instead of repeatedly visiting a website to check for price reductions, we can streamline the process by scraping the website and setting up an automated email notification when prices drop.

This tutorial focuses on scraping the crypto.com/price page to obtain a list of the top 500 cryptocurrencies. Once the data is collected automatically, it can feed further analysis and decision-making. Let's look at how to put web scraping to work for this.

Before we dive into the project, it's a good idea to set up a Python virtual environment. A virtual environment isolates the project's dependencies from the system-wide Python installation, preventing version conflicts and keeping the environment clean. Create and activate one, then install the required modules (on Windows, activate with venv\Scripts\activate instead):

$ python3 -m venv venv
$ source venv/bin/activate
$ pip install requests beautifulsoup4 pandas mysql-connector-python

With the modules installed, we are ready to begin the project.

For web scraping in this project, we will utilize two Python modules: requests and Beautiful Soup. The requests module enables us to fetch the HTML code of a webpage, while Beautiful Soup simplifies the process of extracting specific elements from that code.

First, open your web browser and navigate to the website we want to scrape (crypto.com/price). Use the browser's inspect tool to explore and identify the elements we need to extract. In this project, we aim to retrieve data from the first table on the webpage.

Below is an example of how we can extract the first table from the webpage using the requests and Beautiful Soup modules:
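Here is a minimal sketch of that extraction. It assumes the price table is present in the HTML returned by a plain GET request; if crypto.com builds the table with JavaScript or changes its markup, `find("table")` will come back empty and a browser-automation tool would be needed instead. The helper names and the `User-Agent` value are illustrative assumptions.

```python
import requests
from bs4 import BeautifulSoup

URL = "https://crypto.com/price"


def fetch_html(url=URL):
    """Download the page; a browser-like User-Agent avoids some basic bot blocks."""
    headers = {"User-Agent": "Mozilla/5.0"}
    resp = requests.get(url, headers=headers, timeout=15)
    resp.raise_for_status()
    return resp.text


def extract_first_table(html):
    """Return (headings, rows) for the first <table> in the HTML.

    headings is a list of strings; rows is a list of tuples, one per <tr>
    that contains <td> cells.
    """
    soup = BeautifulSoup(html, "html.parser")
    table = soup.find("table")
    if table is None:
        raise ValueError("no <table> found; the page may render it with JavaScript")
    headings = [th.get_text(strip=True) for th in table.find_all("th")]
    rows = []
    for tr in table.find_all("tr"):
        cells = tr.find_all("td")
        if cells:  # header rows hold only <th>, so they are skipped here
            rows.append(tuple(td.get_text(strip=True) for td in cells))
    return headings, rows
```

Typical use would be `headings, rows = extract_first_table(fetch_html())`.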

HTML can be complex, with nested elements that may require additional filtering and processing to extract the desired text accurately.
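One way to handle nested cells is sketched below with BeautifulSoup's `get_text` separator argument and its `stripped_strings` generator: without a separator, a name cell such as `<p>Bitcoin</p><span>BTC</span>` collapses to `BitcoinBTC`. The `to_number` helper is a hypothetical addition for turning price and percentage strings into floats; it does not expand suffixes such as `B` or `M`.

```python
import re

from bs4 import BeautifulSoup


def cell_text(cell, separator=" "):
    """Join the text of all nested tags with a separator and collapse whitespace."""
    text = cell.get_text(separator, strip=True)
    return re.sub(r"\s+", " ", text)


def cell_parts(cell):
    """Return each non-empty text fragment separately, e.g. ['Bitcoin', 'BTC']."""
    return list(cell.stripped_strings)


def to_number(text):
    """Turn strings like '$37,000.12' or '+2.35%' into floats; None if no number."""
    cleaned = re.sub(r"[^0-9.\-]", "", text)  # drop currency signs, commas, '%', '+'
    try:
        return float(cleaned)
    except ValueError:
        return None
```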

After running the extraction code, we get two lists: one holding the table headings and another holding the table rows as tuples. To turn this data into a DataFrame and save it as a CSV file, we can use the popular pandas library. Let's update the code accordingly:
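A sketch of the pandas step, assuming `headings` and `rows` are the two lists produced by the extraction code. The column-count fallback is an added assumption, since scraped tables sometimes contain more data cells than header cells; `rows_to_dataframe`, `save_csv`, and the CSV filename are hypothetical.

```python
import pandas as pd


def rows_to_dataframe(headings, rows):
    """Build a DataFrame, using generic column names if header and row widths differ."""
    width = len(rows[0]) if rows else len(headings)
    if len(headings) == width:
        columns = list(headings)
    else:
        columns = [f"col_{i}" for i in range(width)]  # fallback when widths mismatch
    return pd.DataFrame(rows, columns=columns)


def save_csv(df, path="crypto_prices.csv"):
    """Write the DataFrame to CSV without the numeric index column."""
    df.to_csv(path, index=False)
```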

To connect Python to the MySQL database, we use the mysql-connector-python module installed earlier.
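A minimal connection sketch using mysql-connector-python. Reading credentials from environment variables is an assumption (the names `MYSQL_HOST`, `MYSQL_USER`, and so on are hypothetical), chosen so passwords stay out of the script. The driver is imported inside `open_connection` so the configuration helper can be used and tested without it.

```python
import os


def db_config_from_env():
    """Read connection settings from hypothetical environment variables."""
    return {
        "host": os.environ.get("MYSQL_HOST", "localhost"),
        "port": int(os.environ.get("MYSQL_PORT", "3306")),
        "user": os.environ.get("MYSQL_USER", "root"),
        "password": os.environ.get("MYSQL_PASSWORD", ""),
        "database": os.environ.get("MYSQL_DATABASE", "crypto"),
    }


def open_connection(config=None):
    """Open a MySQL connection with mysql-connector-python."""
    # Imported here so db_config_from_env works even without the driver installed.
    import mysql.connector

    return mysql.connector.connect(**(config or db_config_from_env()))
```

For example, after `conn = open_connection()`, `conn.is_connected()` should return `True` when the server is reachable; call `conn.close()` when finished.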

To make the code more flexible and able to handle tables with different structures, we can pass the table name in as a variable. This lets us create a new database table, named by the user or by the current timestamp, to store each batch of scraped data.

Table name format: crypto_%Y%m%d%H%M%S
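A sketch of the timestamped-table step, using the `crypto_%Y%m%d%H%M%S` format. It assumes every scraped value is stored as `TEXT` for simplicity, sanitizes headings like `24H Change` into column names, and quotes identifiers with MySQL backticks; `make_table_name`, `sanitize_column`, `build_sql`, and `save_rows` are hypothetical names.

```python
import re
from datetime import datetime


def make_table_name(now=None):
    """Timestamped table name, e.g. crypto_20240102030405."""
    return (now or datetime.now()).strftime("crypto_%Y%m%d%H%M%S")


def sanitize_column(name, index):
    """Turn a scraped heading like '24H Change' into a safe column name."""
    cleaned = re.sub(r"\W+", "_", name).strip("_").lower()
    return cleaned or f"col_{index}"  # headings like '#' become col_<index>


def build_sql(table, headings):
    """Return CREATE TABLE and INSERT statements; every column is stored as TEXT."""
    cols = []
    for i, heading in enumerate(headings):
        col = sanitize_column(heading, i)
        cols.append(col if col not in cols else f"{col}_{i}")  # avoid duplicate names
    col_defs = ", ".join(f"`{c}` TEXT" for c in cols)
    create = f"CREATE TABLE `{table}` ({col_defs})"
    col_list = ", ".join(f"`{c}`" for c in cols)
    placeholders = ", ".join(["%s"] * len(cols))  # mysql-connector uses %s placeholders
    insert = f"INSERT INTO `{table}` ({col_list}) VALUES ({placeholders})"
    return create, insert


def save_rows(conn, table, headings, rows):
    """Create the table and bulk-insert rows through an open DB-API connection."""
    create, insert = build_sql(table, headings)
    cursor = conn.cursor()
    try:
        cursor.execute(create)
        cursor.executemany(insert, rows)
        conn.commit()
    finally:
        cursor.close()
```

With the DataFrame from earlier, a call could look like `save_rows(conn, make_table_name(), list(df.columns), list(df.itertuples(index=False, name=None)))`.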

Running this code transfers all the scraped cryptocurrency data to the database.

That’s it!

Happy Scraping!

If you want more details, or need mobile app scraping, an instant data scraper, or web scraping services, you can contact Actowiz Solutions anytime!

Our web scraping expertise is relied on by 4,000+ global enterprises including Zomato, Tata Consumer, Subway, and Expedia — helping them turn web data into growth.

Watch how businesses like yours are using Actowiz data to drive growth.

From Zomato to Expedia — see why global leaders trust us with their data.

Backed by automation, data volume, and enterprise-grade scale — we help businesses from startups to Fortune 500s extract competitive insights across the USA, UK, UAE, and beyond.

We partner with agencies, system integrators, and technology platforms to deliver end-to-end solutions across the retail and digital shelf ecosystem.

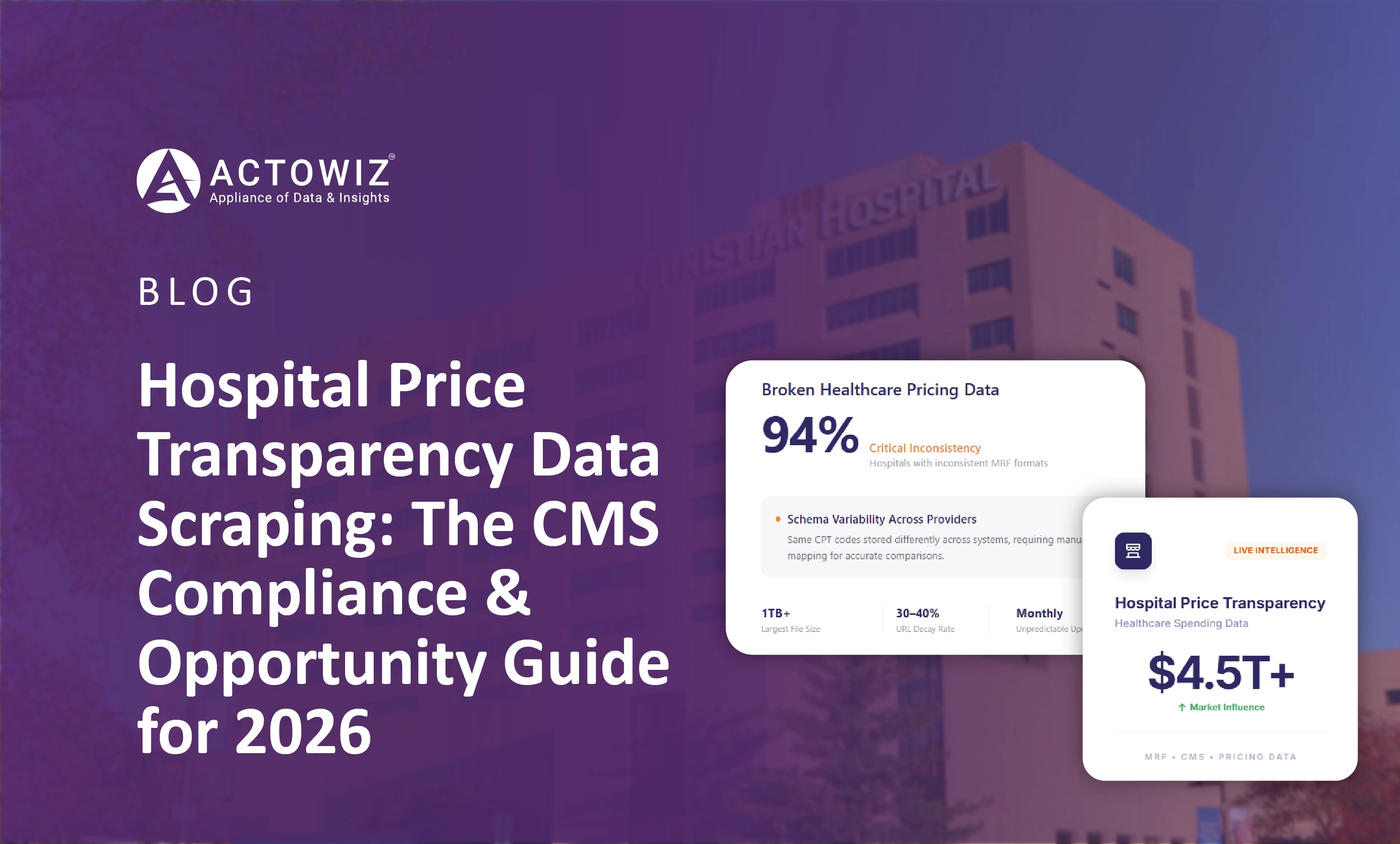

How healthcare payers, startups, and analysts scrape CMS-mandated hospital price transparency files at scale. Complete 2026 guide to MRF extraction and use cases.

Discover how a Dubai cloud kitchen group saved $2.1M annually and scaled to 80+ virtual brands using Talabat and Careem food intelligence. Learn how data-driven insights optimize menus, pricing, and growth.

Track UK Grocery Products Daily Using Automated Data Scraping across Morrisons, Asda, Tesco, Sainsbury’s, Iceland, Co-op, Waitrose, and Ocado for insights.

Whether you're a startup or a Fortune 500 — we have the right plan for your data needs.