Introduction

This blog walks through how to quickly program a web data scraper. Websites change over time, and when they do, the code shown here will stop working. The aim is to help you understand how a web scraper is built and how it works so that you can create and maintain your own.

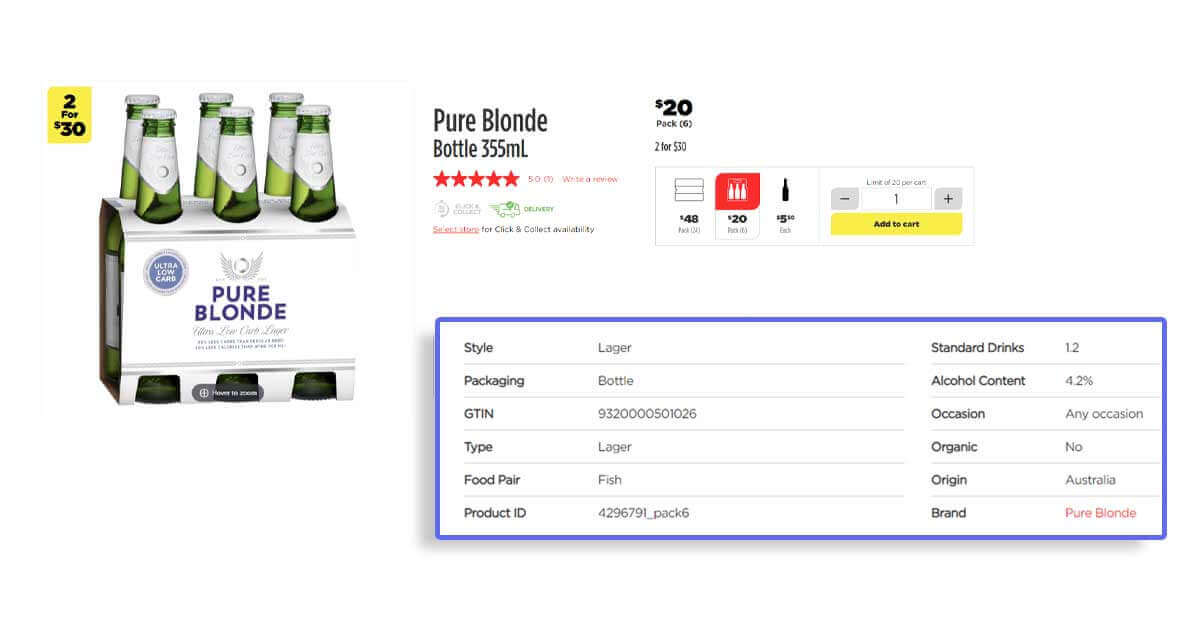

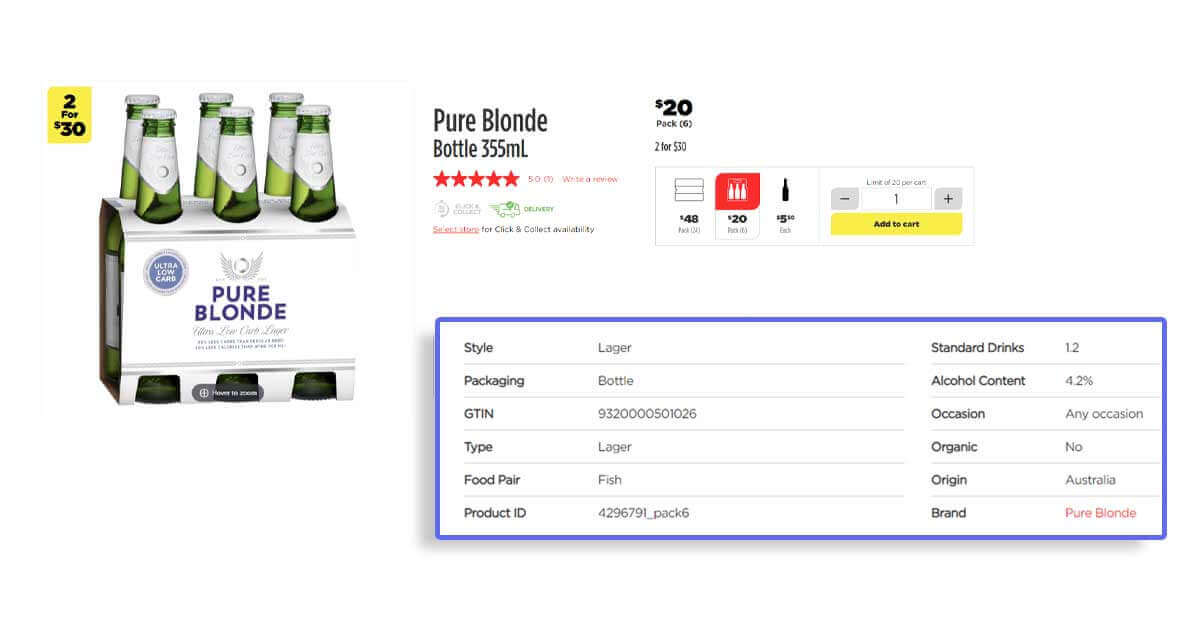

At Actowiz Solutions, we extract the following data fields from wine stores:

- Name

- Prices

- Delivery Available – whether the liquor can be delivered to you

- InStock – whether the liquor is in stock

- Quantity or Size

- URL

We can save the data in CSV or Excel format.

Install the Required Packages to Run the Total Wine Store Web Scraper

We will use Python 3 and its libraries, so the scraper can run in the cloud, on a VPS, or on a Raspberry Pi. We use these libraries:

- Python Requests, to make requests and download the HTML content of the pages (http://docs.python-requests.org/en/master/user/install/).

- Selectorlib, to extract data from the downloaded pages using a YAML file that we create.

The Python Code

To get the complete code used in this blog, contact us at

https://www.actowizsolutions.com/contact-us.php

Let’s create a file called products.py and paste the Python code into it.

This code does the following:

- Reads a list of Total Wine and More URLs from a file called urls.txt (this file holds the URLs of the TWM product pages you care about, such as Beer, Scotch, Wines, etc.)

- Uses a selectorlib YAML file, saved as selectors.yml, to identify the data on Total Wine pages

- Extracts the desired data

- Saves the data to a CSV file called data.csv
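The four steps above could be sketched roughly as follows. This is a minimal illustration under assumptions, not the complete code referred to above: the column names in FIELDS (including DeliveryAvailable), the User-Agent string, and the helper names read_urls, scrape, and write_csv are all hypothetical, and the keys in selectors.yml are assumed to match FIELDS. It also assumes requests and selectorlib are installed, for example with pip3 install requests selectorlib.

```python
import csv

# Hypothetical column names based on the fields listed earlier in this post;
# the keys in selectors.yml are assumed to use the same names.
FIELDS = ["Name", "Price", "Size", "InStock", "DeliveryAvailable", "URL"]

# Placeholder browser-like header (an assumption, adjust as needed).
HEADERS = {"User-Agent": "Mozilla/5.0 (X11; Linux x86_64)"}


def read_urls(path="urls.txt"):
    """Return the non-blank lines of the URL file, stripped of whitespace."""
    with open(path, encoding="utf-8") as f:
        return [line.strip() for line in f if line.strip()]


def scrape(url, extractor, session):
    """Download one page and return the extracted fields as a dict."""
    response = session.get(url, headers=HEADERS, timeout=30)
    response.raise_for_status()  # fail on 4xx/5xx instead of parsing an error page
    record = extractor.extract(response.text) or {}
    record["URL"] = url
    return record


def write_csv(records, path="data.csv"):
    """Write the records to a CSV file, ignoring keys that are not in FIELDS."""
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS, extrasaction="ignore")
        writer.writeheader()
        writer.writerows(records)


def main():
    # Third-party imports live here so the helpers above work without them.
    import requests
    from selectorlib import Extractor

    extractor = Extractor.from_yaml_file("selectors.yml")
    with requests.Session() as session:
        records = [scrape(url, extractor, session) for url in read_urls()]
    write_csv(records)
```

Calling main() from the folder that holds urls.txt and selectors.yml would produce data.csv. Passing the session and extractor into scrape keeps the download step easy to swap out or test without touching the network.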

Make a YAML file called selectors.yml
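As a rough illustration of what such a file might contain, the sketch below writes a starter selectors.yml in selectorlib's format (a field name, then a css selector and a type). Every CSS selector here is a placeholder and not Total Wine's real markup, and the field names and the write_selectors helper are hypothetical; the real selectors have to be found by inspecting the live pages. Pasting the YAML text into selectors.yml by hand works just as well as writing it from Python.

```python
# Hypothetical selectors.yml content. The CSS selectors are placeholders,
# not Total Wine's real markup; replace them after inspecting the live pages.
SELECTORS_YML = """\
Name:
    css: 'h1.product-title'
    type: Text
Price:
    css: 'span.price'
    type: Text
Size:
    css: 'span.product-size'
    type: Text
InStock:
    css: 'span.stock-status'
    type: Text
DeliveryAvailable:
    css: 'span.delivery-status'
    type: Text
"""


def write_selectors(path="selectors.yml"):
    """Save the selector definitions where the scraper expects to find them."""
    with open(path, "w", encoding="utf-8") as f:
        f.write(SELECTORS_YML)
```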

Run the Total Wine Scraper

You only need to add the URLs you want to scrape to a text file called urls.txt in the same folder as products.py.

Then run the scraper with the following command:

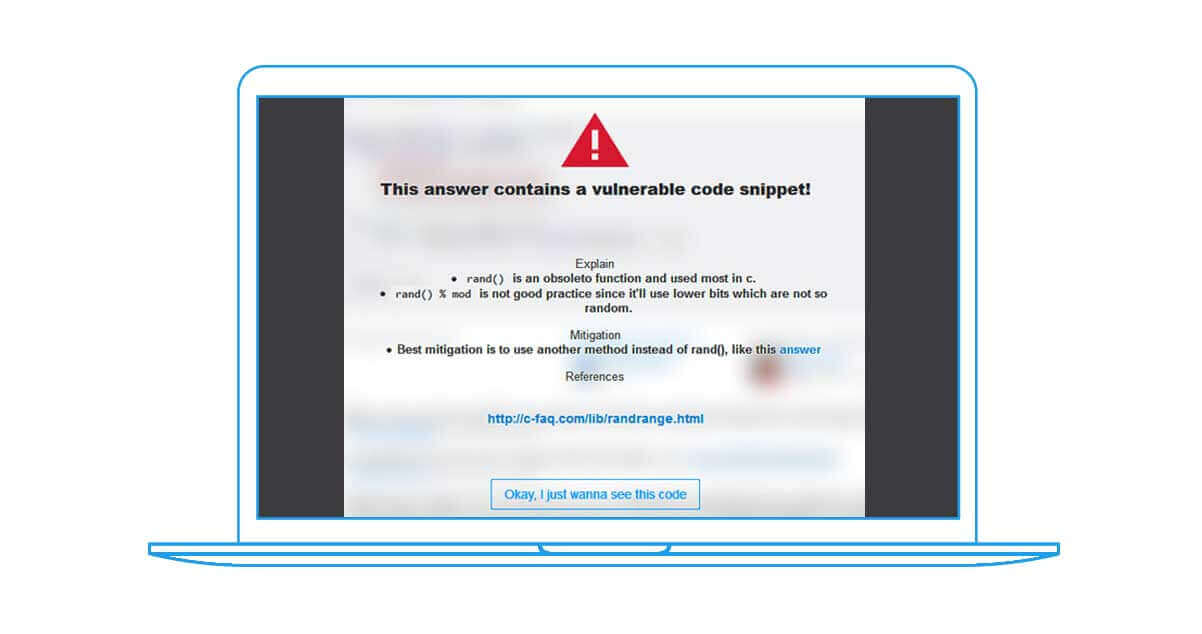

Problems with This Code and Other Internet Scripts or Self-Service Tools

- The site may change its structure. For example, the CSS selector used for the price in selectors.yml, price__1JvDDp_x, might change daily.

- The choice of a “local” store could depend on variables other than the geolocation of your IP address, and the website could ask you to select a location. The simple code here does not handle this.

- The website could add new data points or change the existing ones.

- The website could block the User-Agent.

- The website might block the access pattern the script uses.

- The website might block IPs from different proxy providers.
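Several of these problems show up the same way: fields that used to be filled come back empty. One way to notice this early is to check every scraped record before saving it. The sketch below is an assumption-laden illustration, not part of the original scraper: the REQUIRED_FIELDS tuple and both helper names are hypothetical, and it assumes a selector that matches nothing yields None or an empty string for that field.

```python
# Assumed minimum set of fields for a record to be usable (hypothetical).
REQUIRED_FIELDS = ("Name", "Price")


def missing_fields(record, required=REQUIRED_FIELDS):
    """Return the required fields that are absent, None, or blank in a record."""
    missing = []
    for field in required:
        value = record.get(field)
        if value is None or not str(value).strip():
            missing.append(field)
    return missing


def report_broken_selectors(records, required=REQUIRED_FIELDS):
    """Map each URL to its missing fields, skipping records that look complete."""
    problems = {}
    for record in records:
        missing = missing_fields(record, required)
        if missing:
            problems[record.get("URL", "<unknown>")] = missing
    return problems
```

If the same field is missing for every URL, the selector has most likely gone stale; if everything is missing for some URLs only, a block or a location prompt is a more likely cause.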

That is why full-service companies like Actowiz Solutions work much better than products, DIY scripts, and self-service tools. Most people learn this lesson after using a DIY or self-service tool and watching it break frequently. With the extracted data, you can analyze the prices and brands of your preferred wines.

If you want assistance with challenging data scraping projects, contact Actowiz Solutions and we will assist you! You can also call us for your mobile app scraping or web scraping service requirements.