In this post, we discuss one of the world's most attractive cities: Istanbul. It is a bustling, vibrant city at the crossroads of Asia and Europe. As the largest city in Turkey, it is home to more than 15 million people. The city is also a hub of tourism, culture, and commerce.

Istanbul's real estate market has grown significantly in recent years, and the rental flat market is particularly dynamic. With its unique blend of modernity and history, the city is a leading subject for real estate market research and data analytics.

However, Turkey's economy is struggling. In the last year, the inflation rate was around 86 percent, and the economy remains unstable.

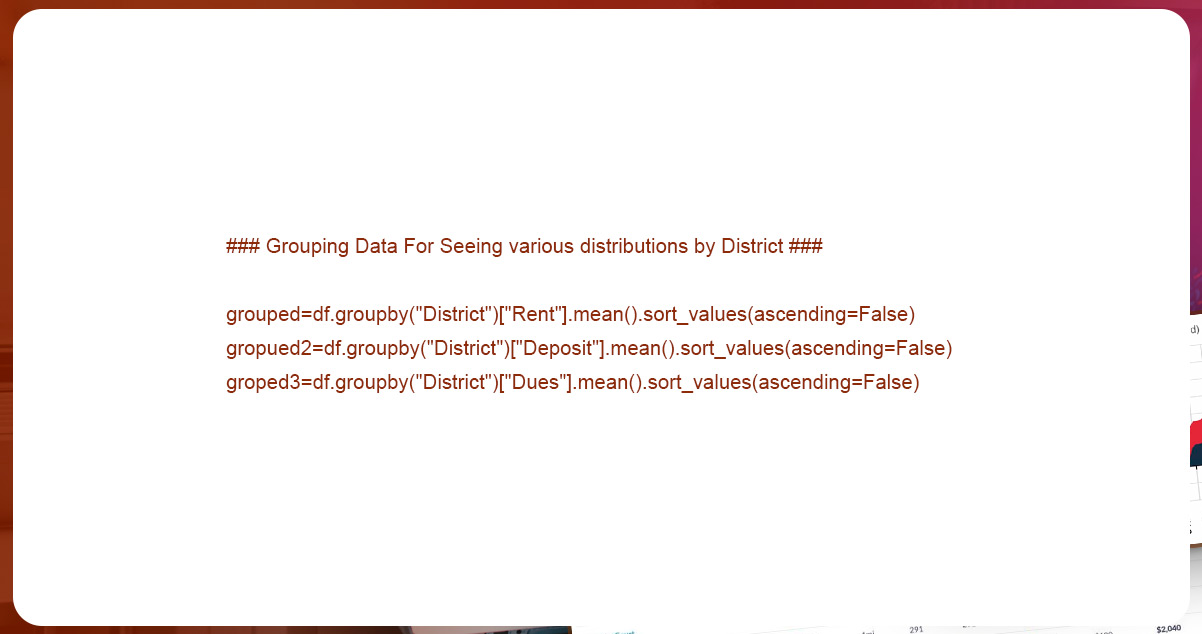

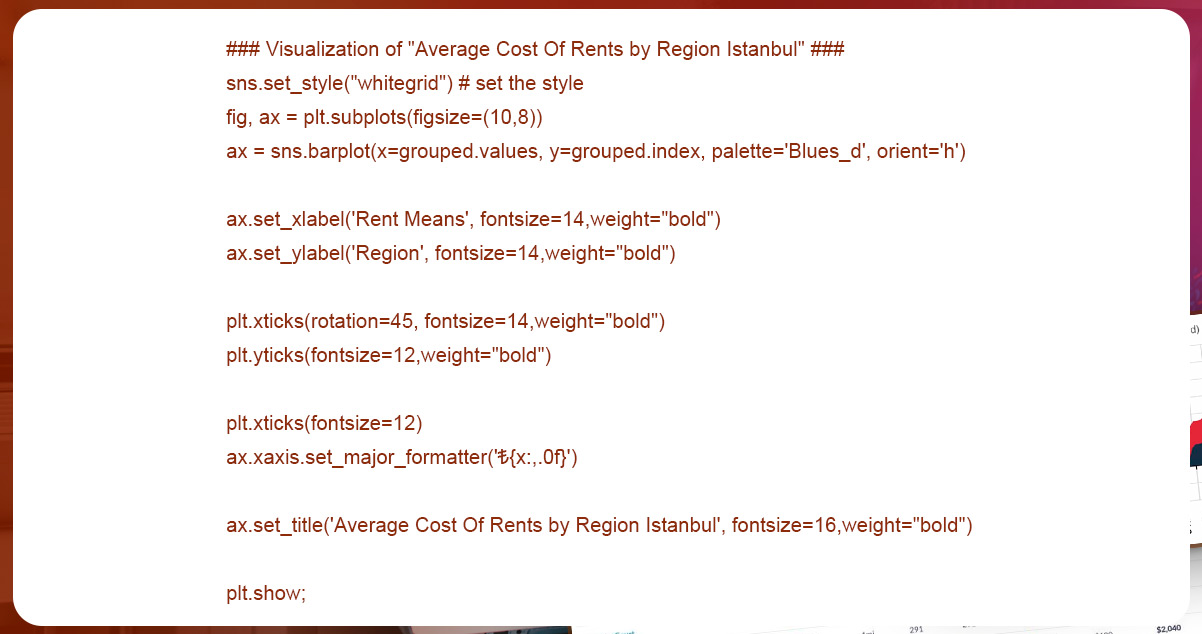

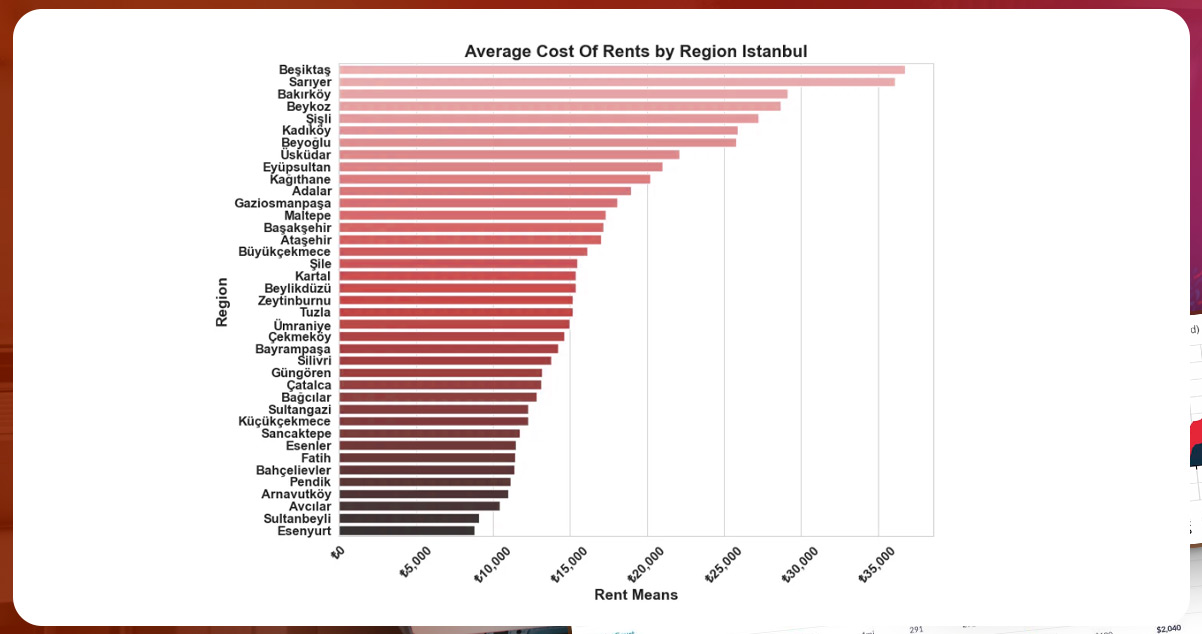

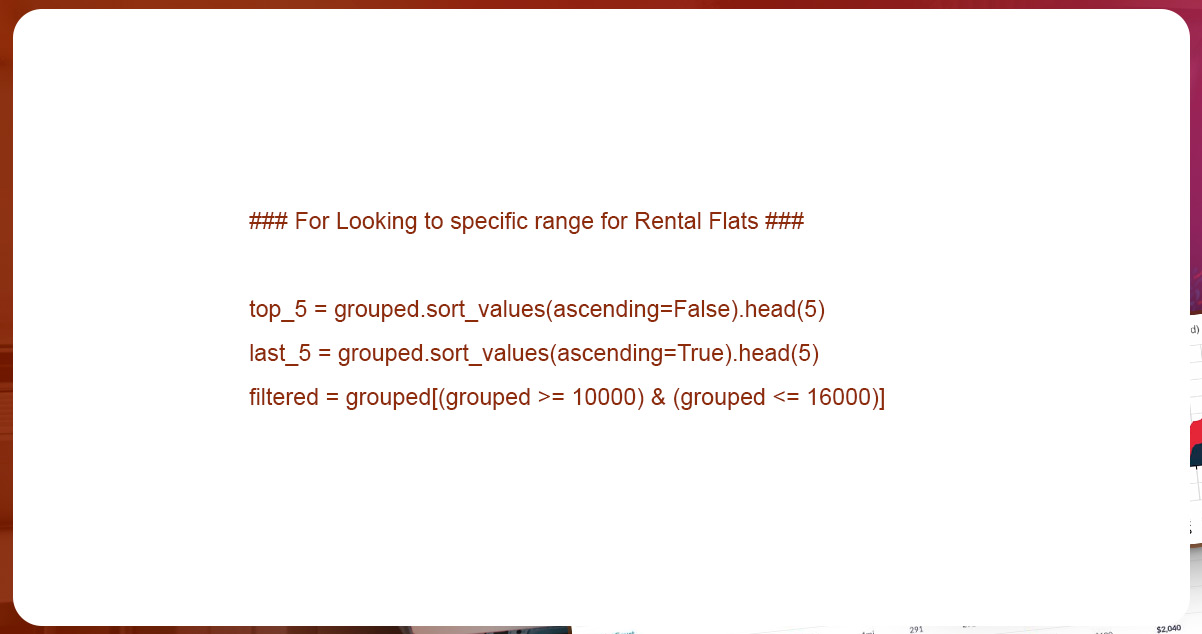

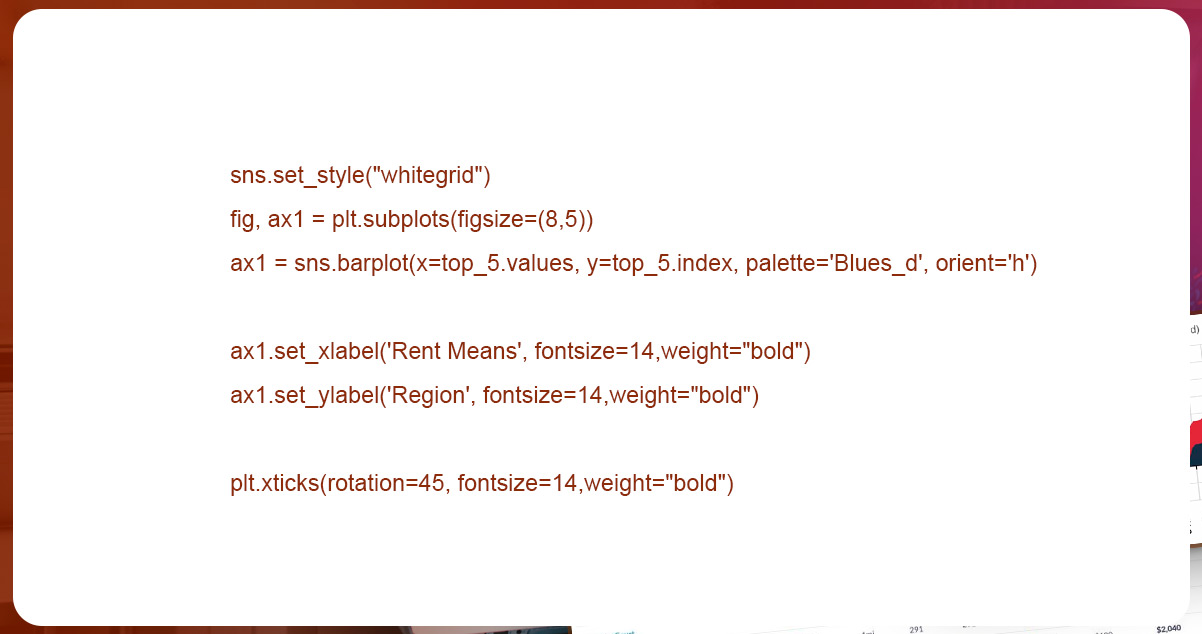

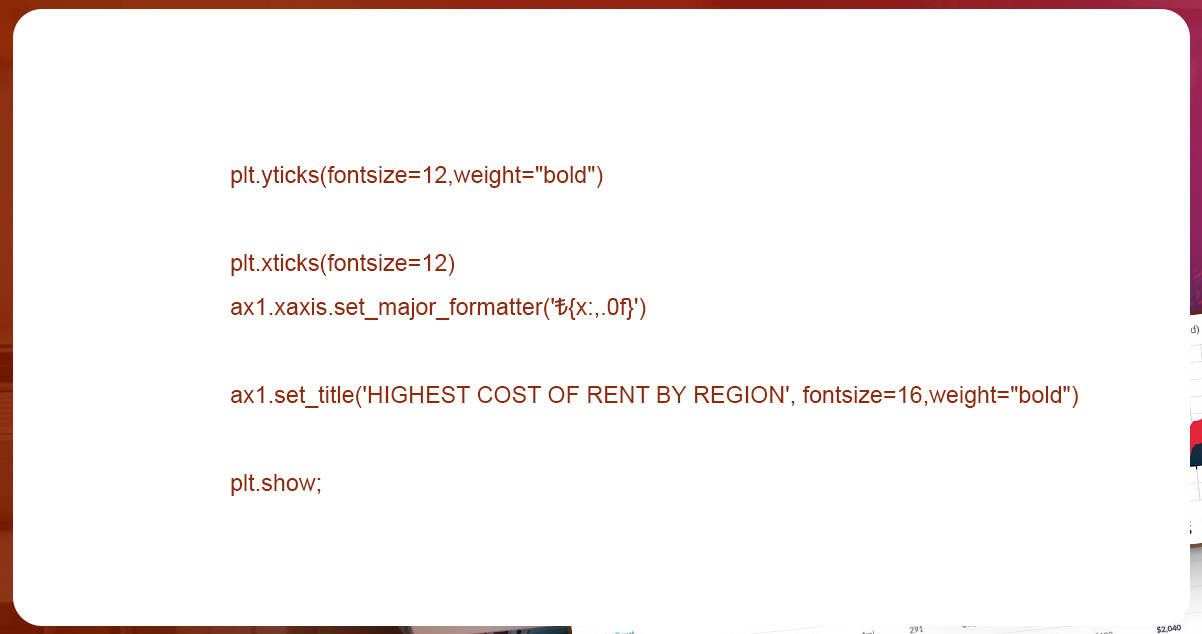

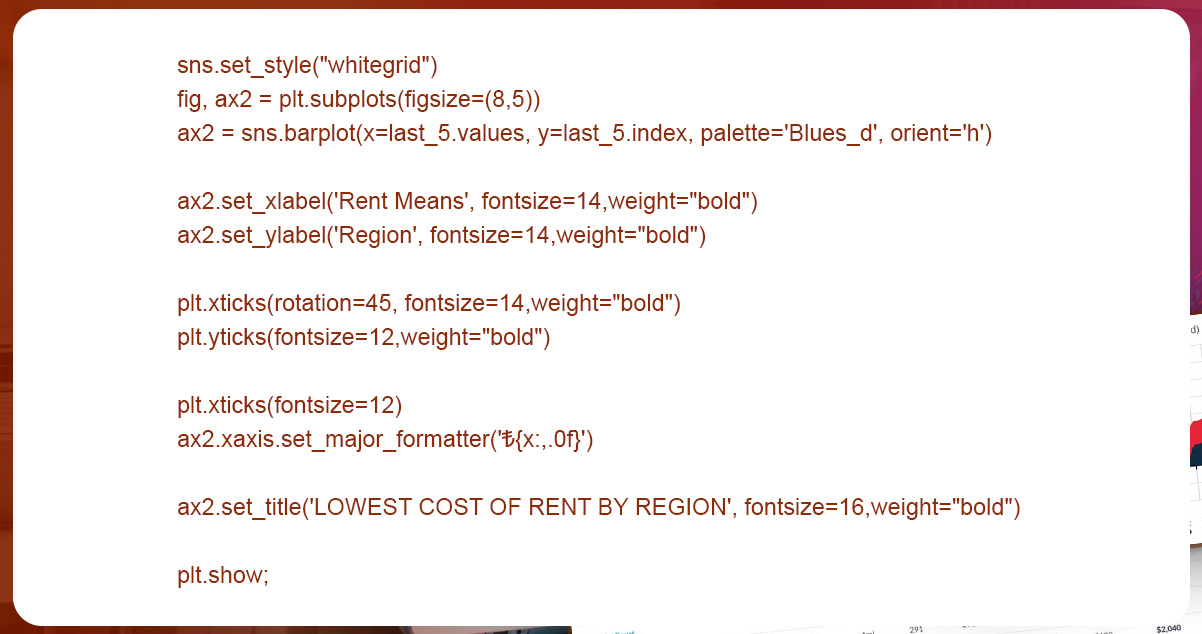

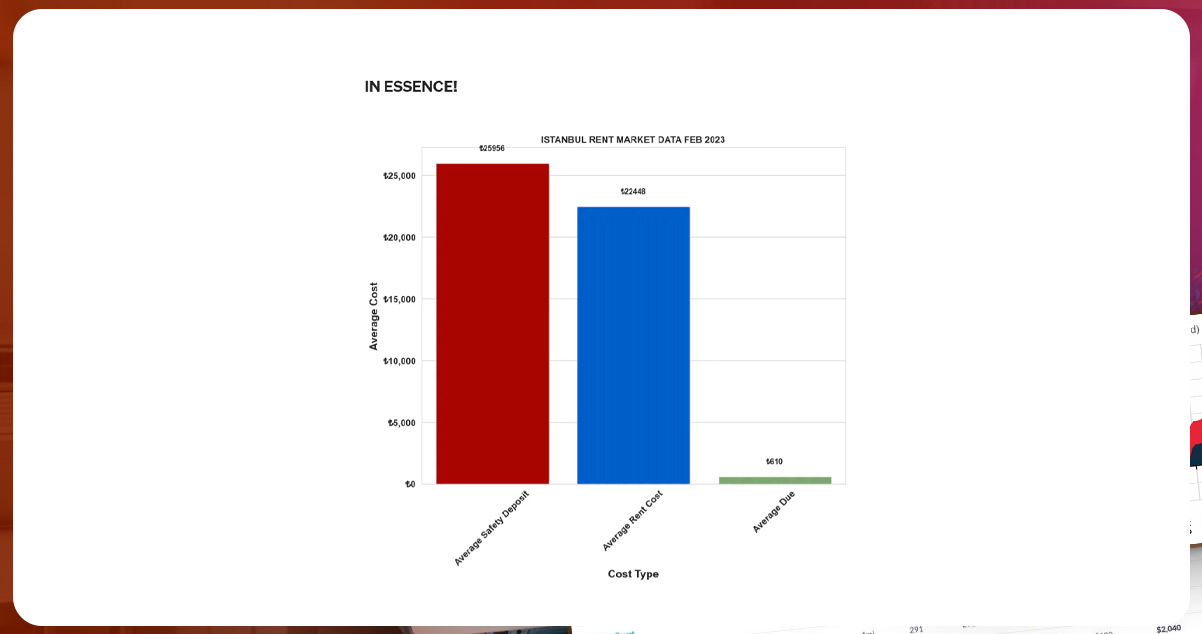

We decided to experiment with analyzing data on Istanbul's rental flat market, using our usual data scraping techniques to collect the data.

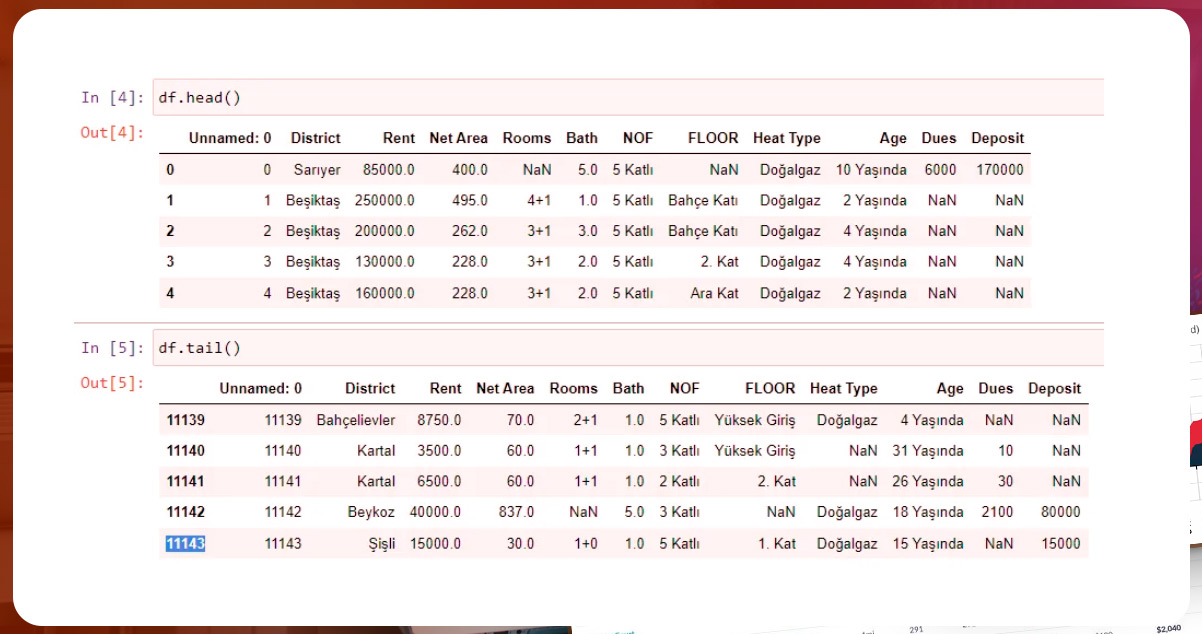

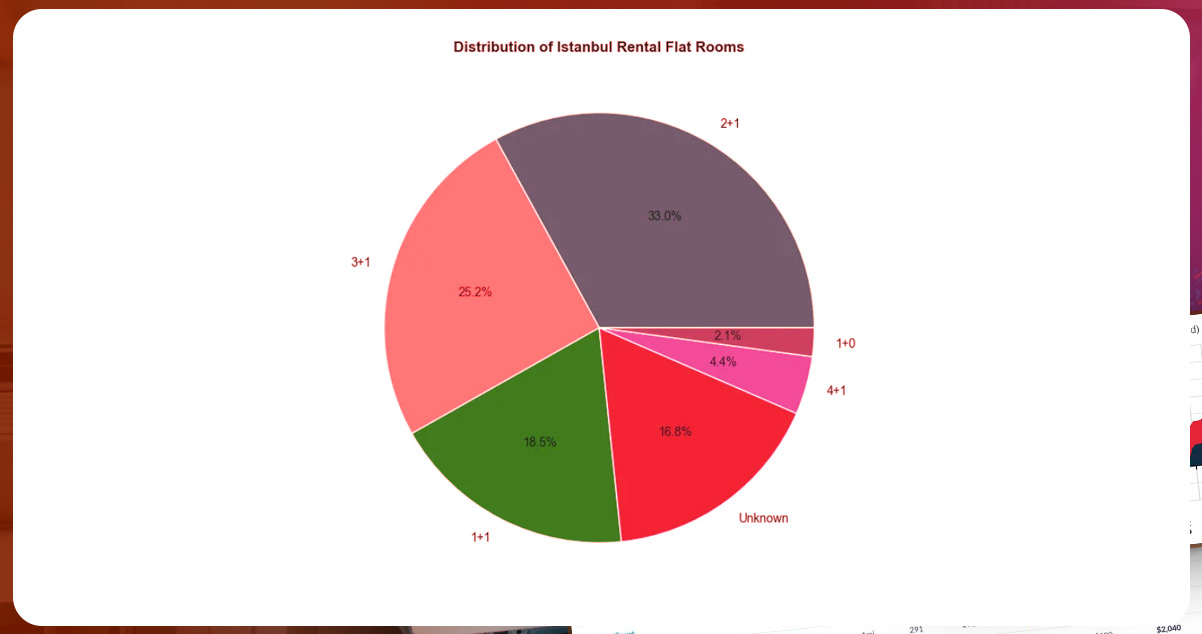

We scraped data for around 13,000 flats for rent. Here are some interesting visualizations and figures from the exploratory data analysis (EDA) process.

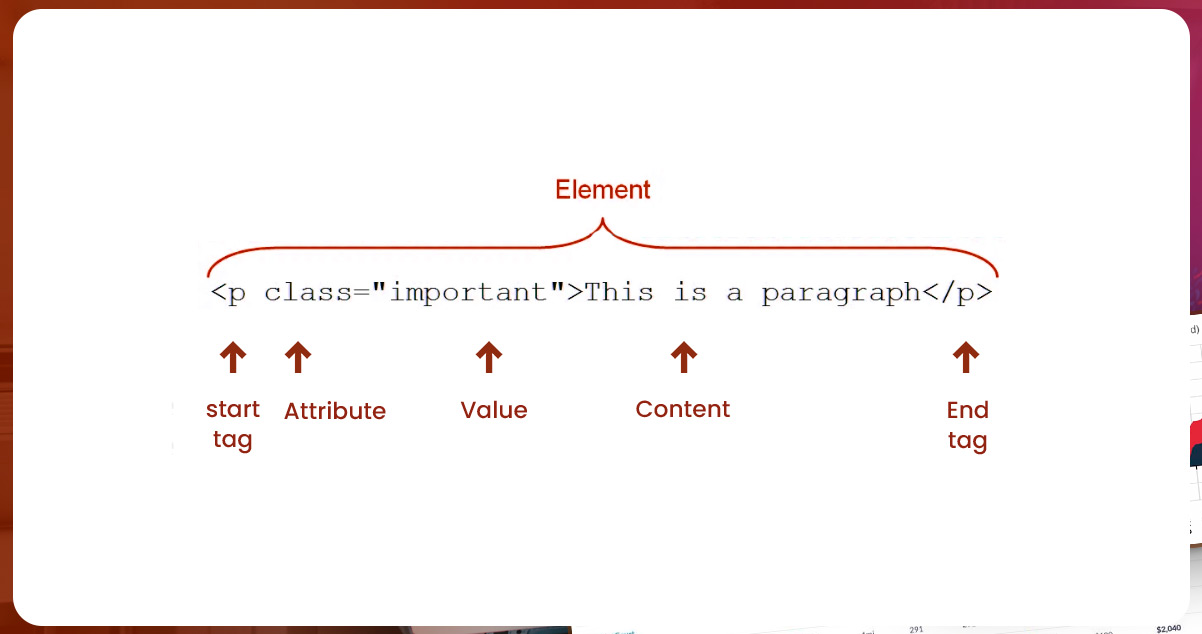

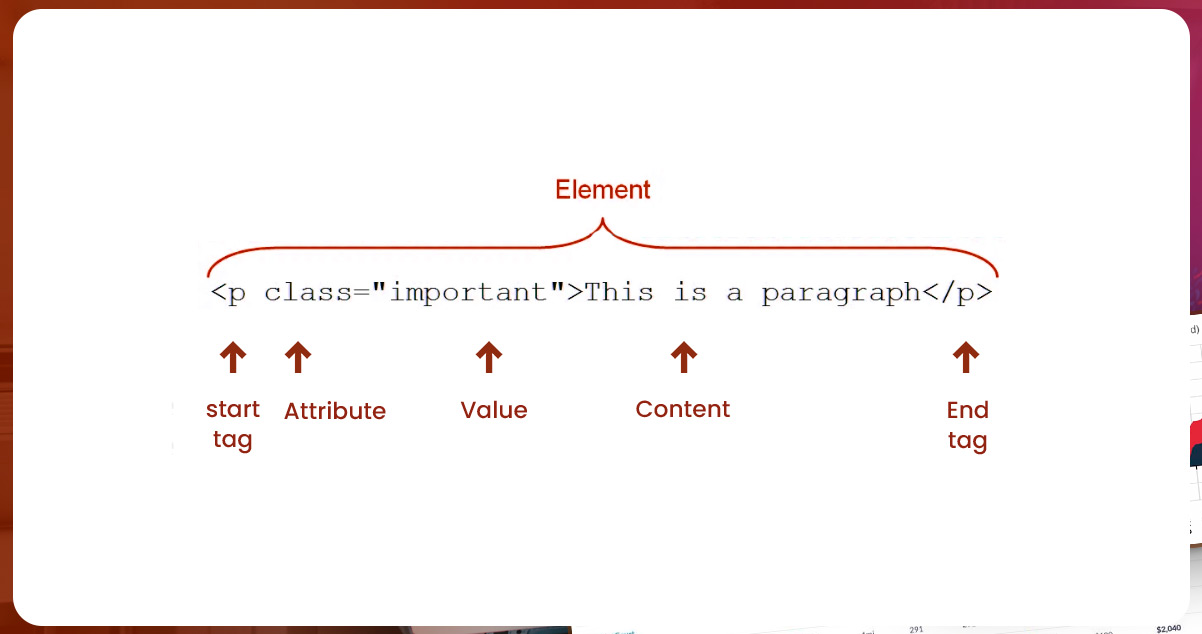

Introduction to HTML

Hypertext Markup Language (HTML) is used to create and structure web content. An HTML page holds the data that web scraping relies on: images, text content, links, and other data fields that webpages use. With the proper techniques and tools, you can scrape data from these web pages and parse it to compile a spreadsheet, study trends, and make better business decisions. Understanding the structure of HTML in detail plays a crucial role in web data extraction.
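To make the idea of HTML structure concrete, here is a minimal sketch that walks a small page fragment with Python's standard-library `html.parser` module and records which tags it contains and what visible text they hold. The `SAMPLE_HTML` fragment and the `OutlineParser` class are illustrative assumptions, not the markup or code behind this post.

```python
from html.parser import HTMLParser

# Hypothetical page fragment for illustration; real listing pages will differ.
SAMPLE_HTML = """
<html>
  <body>
    <h1>Flats for rent</h1>
    <p>Two-bedroom flat in Kadikoy</p>
    <a href="/listing/1">Details</a>
    <img src="/img/1.jpg" alt="Living room">
  </body>
</html>
"""


class OutlineParser(HTMLParser):
    """Records the tag names and the visible text found in a document."""

    def __init__(self):
        super().__init__()
        self.tags = []   # tag names in document order
        self.texts = []  # non-empty text fragments in document order

    def handle_starttag(self, tag, attrs):
        self.tags.append(tag)

    def handle_data(self, data):
        text = data.strip()
        if text:  # skip whitespace-only runs between tags
            self.texts.append(text)


parser = OutlineParser()
parser.feed(SAMPLE_HTML)
parser.close()

print(parser.tags)
print(parser.texts)
```

Seeing the page as an ordered sequence of tags and text is usually the first step before deciding which elements hold the fields worth extracting.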

HTML attributes are vital for creating responsive, accessible, and well-formatted web pages. An attribute gives extra information about its element: it is written in the element's opening tag and can change the element's appearance or modify its behavior. You can use attributes to specify color, size, font, and other element features, or to supply alt text, links, or additional metadata. You can also use attributes to define the IDs and classes that are essential for script targeting and styling.

- class attribute: Assigns one or more class names to an element. You can use it to apply CSS styles or to target the element from JavaScript.
- id attribute: Defines a unique identifier for an HTML element. You can use it to target the element from JavaScript or to identify a fragment in the URL.
- src attribute: Defines the source URL for a media element, such as an image.
- href attribute: Defines the destination URL for a link element.
- alt attribute: Gives alternative text for an image element. If the image can't load, the alt text is shown on the screen.
- title attribute: Gives a title to an HTML element.
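The attributes listed above can be read programmatically. The following is a minimal sketch, again using only Python's standard-library `html.parser`; the sample markup, the attribute values, and the `AttributeCollector` class are made up for illustration.

```python
from html.parser import HTMLParser

# Hypothetical markup showing the six attributes discussed above.
SAMPLE_HTML = """
<div id="listing-42" class="card featured" title="Sea-view flat">
  <a href="/listing/42">View</a>
  <img src="/img/42.jpg" alt="Balcony view">
</div>
"""


class AttributeCollector(HTMLParser):
    """Collects every opening tag together with its attributes."""

    def __init__(self):
        super().__init__()
        self.elements = []  # list of (tag name, attribute dict) pairs

    def handle_starttag(self, tag, attrs):
        # attrs arrives as a list of (name, value) tuples
        self.elements.append((tag, dict(attrs)))


collector = AttributeCollector()
collector.feed(SAMPLE_HTML)
collector.close()

for tag, attrs in collector.elements:
    print(tag, attrs)

# A class attribute may hold several space-separated class names.
div_classes = collector.elements[0][1]["class"].split()
print(div_classes)
```

Splitting the `class` value on whitespace matters in practice, because an element often carries several classes and a scraper usually matches on just one of them.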

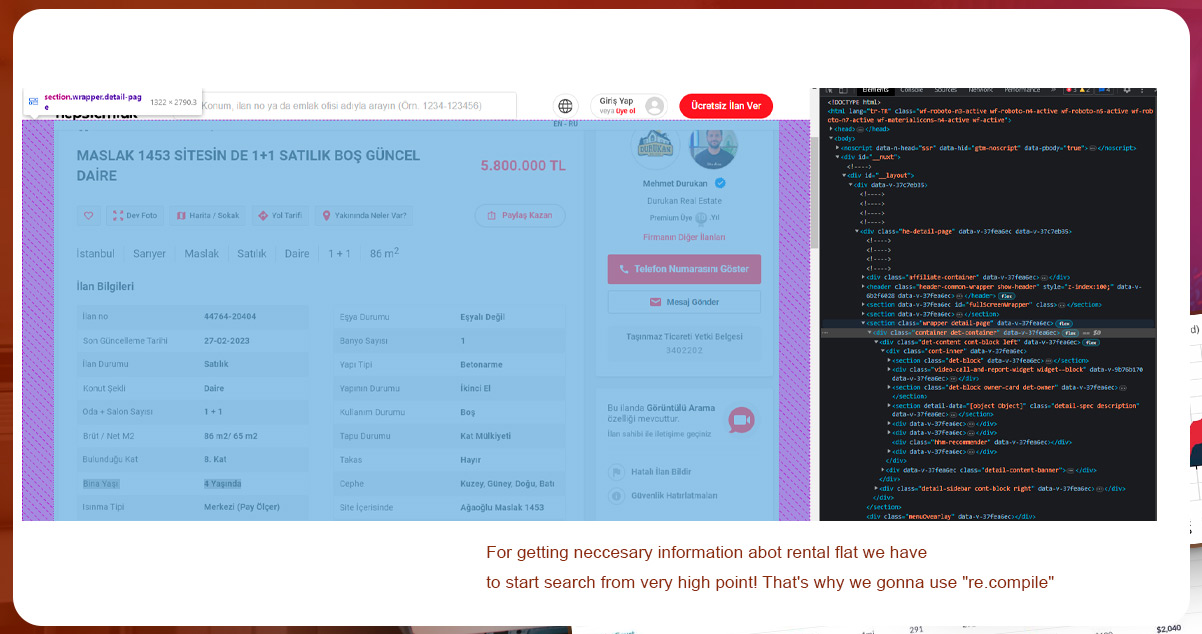

We need to uncover the HTML elements of the website. To do this, we'll use the Google Chrome browser: right-click your target on the page and choose Inspect.

When scraping web data, we generally need class names and href links to locate the required data. We will explain an example of this below.
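As one possible illustration of targeting data by class name and href, the sketch below collects the links whose `class` includes a chosen name and resolves each `href` against a base URL. The class name `listing-link`, the base URL `https://example.com`, the sample markup, and the `ListingLinkParser` class are all hypothetical; the names found with Chrome's Inspect tool on a real site will differ, and this is not necessarily how the data for this post was collected.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

BASE_URL = "https://example.com"  # placeholder, not a real listings site

# Hypothetical markup; "listing-link" is an assumed class name.
SAMPLE_HTML = """
<ul>
  <li><a class="listing-link" href="/flat/101">Flat in Besiktas</a></li>
  <li><a class="nav-link" href="/about">About</a></li>
  <li><a class="listing-link highlighted" href="/flat/102">Flat in Uskudar</a></li>
</ul>
"""


class ListingLinkParser(HTMLParser):
    """Collects the URL and text of <a> elements that carry a target class."""

    def __init__(self, target_class, base_url):
        super().__init__()
        self.target_class = target_class
        self.base_url = base_url
        self.links = []
        self._current = None  # the matching link currently being read, if any

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        classes = (attr_map.get("class") or "").split()
        href = attr_map.get("href")
        if self.target_class in classes and href:
            # Resolve relative hrefs into absolute URLs
            self._current = {"url": urljoin(self.base_url, href), "text": ""}

    def handle_data(self, data):
        if self._current is not None:
            self._current["text"] += data

    def handle_endtag(self, tag):
        if tag == "a" and self._current is not None:
            self._current["text"] = self._current["text"].strip()
            self.links.append(self._current)
            self._current = None


link_parser = ListingLinkParser("listing-link", BASE_URL)
link_parser.feed(SAMPLE_HTML)
link_parser.close()

for link in link_parser.links:
    print(link["url"], "|", link["text"])
```

The navigation link is skipped because its class does not match, which is the reason class names are so useful for separating listing data from the rest of a page.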

Conclusion

Istanbul is a remarkable city and a center of diversity, business, and entertainment. Its rental flat market is growing. Want to scrape flat rental data for Istanbul? Contact Actowiz Solutions.